LockBit ransomware found

LockBit ransomware was recently identified by Darktrace's Cyber AI during a trial with a retail company in the US. After an initial foothold was established via a compromised administrative credential, internal reconnaissance, lateral movement, and encryption of files occurred simultaneously, allowing the ransomware to steamroll through the digital system in just a few hours.

This incident serves as the latest reminder that ransomware campaigns now move through organizations at a speed that far outpaces human responders, demonstrating the need for machine-speed Autonomous Response to contain the threat before damage is done.

LockBit ransomware defined

First discovered in 2019, LockBit is a relatively new family of ransomware that quickly exploits commonly available protocols and tools like SMB and PowerShell. It was originally known as ‘ABCD’ due to the filename extension of the encrypted files, before it started using the current .lockbit extension. Since those early beginnings, it has evolved into one of the most calamitous strains of malware to date, asking for an average ransom of around $40,000 per organization.

As cyber-criminals level up the speed and scale of their attacks, ransomware remains a critical concern for organizations across every industry. In the past 12 months, Darktrace has observed an increase of over 20% in ransomware incidents across its customer base. Attackers are constantly developing new threat variants targeting exploits, utilizing off-the-shelf tools, and profiting from the burgeoning Ransomware-as-a-Service (RaaS) business model.

How does LockBit work?

In a typical attack, a threat actor will spend days or weeks inside a system, manually screening for the best way to grind the victim’s business to a halt. This phase tends to expose multiple indicators of compromise such as command and control (C2) beaconing, which Darktrace AI identifies in real time.

LockBit, however, only requires the presence of a human for a number of hours, after which it propagates through a system and infects other hosts on its own, without the need for human oversight. Crucially, the malware performs reconnaissance and continues to spread during the encryption phase. This allows it to cause maximal damage faster than manual approaches.

AI-powered defense is essential in fighting back against these machine-driven attacks, which have the capacity to spread at speed and scale, and often go undetected by signature-based security tools. Cyber AI augments human teams by not only detecting the subtle signs of a threat, but autonomously responding in seconds, quicker than any human can be expected to react.

Ransomware analysis: Breaking down a LockBit attack with AI

Figure 1: Timeline of attack on the infected host and the encryption host. The infected host was the device initially infected with LockBit, which then spread to the encryption host, the device which performed the encryption.

Initial compromise

The attack commenced when a cyber-criminal gained access to a single privileged credential – either through a brute-force attack on an externally facing device, as seen in previous LockBit ransomware attacks, or simply with a phishing email. With the use of this credential, the ransomware was able to spread and encrypt files within hours of the initial infection.

Had the method of infiltration been a phishing attack, a route that has become increasingly popular in recent months, Darktrace/EMAIL would have withheld the email and stripped the malicious payloads, and so prevented the attack from the outset.

Limiting permissions, using strong passwords, and enforcing multi-factor authentication (MFA) are critical in preventing the exploitation of standard network protocols in such attacks.

Internal reconnaissance

At 14:19 local time, the first of many WMI commands (ExecMethod) to multiple internal destinations was performed by an internal IP address over DCE-RPC. This series of commands occurred throughout the encryption process. Given these commands were unusual in the context of the normal ‘pattern of life’ for the organization, Darktrace DETECT alerted the security team to each of these connections.

Within three minutes, the device had started to write executable files over SMB to hidden shares on multiple destinations – many of which were the same. File writes to hidden shares are ordinarily restricted. However, the unauthorized use of an administrative credential granted these privileges. The executable files were written to the Windows/Temp directory. The filenames followed a similar format: .*eck[0-9]?.exe
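As a rough illustration of how the filename pattern above could be applied, here is a minimal Python sketch. It assumes the dot before "exe" is meant as a literal dot (so it is escaped here), and the sample filenames other than eck3.exe are hypothetical. It is not a description of how Darktrace matches files.

```python
import re

# Pattern quoted in the write-up, with the final dot escaped so it matches a
# literal "." (an assumption about the intended meaning).
ECK_PATTERN = re.compile(r".*eck[0-9]?\.exe", re.IGNORECASE)


def is_suspicious_filename(name: str) -> bool:
    """Return True when the whole filename matches the reported pattern."""
    return ECK_PATTERN.fullmatch(name) is not None


# Hypothetical filenames for illustration; only eck3.exe is named in the write-up.
samples = ["eck3.exe", "check.exe", "notepad.exe", "eck12.exe"]
matches = [s for s in samples if is_suspicious_filename(s)]
print(matches)
```

Note that a benign name such as check.exe also matches, which is one reason a filename pattern alone is a weak indicator and is better combined with context such as the destination share and the credential used.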

Darktrace identified each of these SMB writes as a potential threat, since such administrative activity was unexpected from the compromised device.

The WMI commands and executable file writes continued to be made to multiple destinations. In less than two hours, the ExecMethod command was delivered to a critical device – the ‘encryption host’ – shortly followed by an executable file write (eck3.exe) to its hidden c$ share.

LockBit’s script can check its current privileges and, if they are non-administrative, attempts to bypass Windows User Account Control (UAC). This particular host did provide the required privileges to the process. Once this device was infected, encryption began.

File encryption

Only one second after encryption had started, Darktrace alerted on the unusual file extension being appended, in addition to the previous high-fidelity alerts for earlier stages of the attack lifecycle.

A recovery file – ‘Restore-My-Files.txt’ – was identified by Darktrace one second after the first encryption event. 8,998 recovery files were written, one to each encrypted folder.

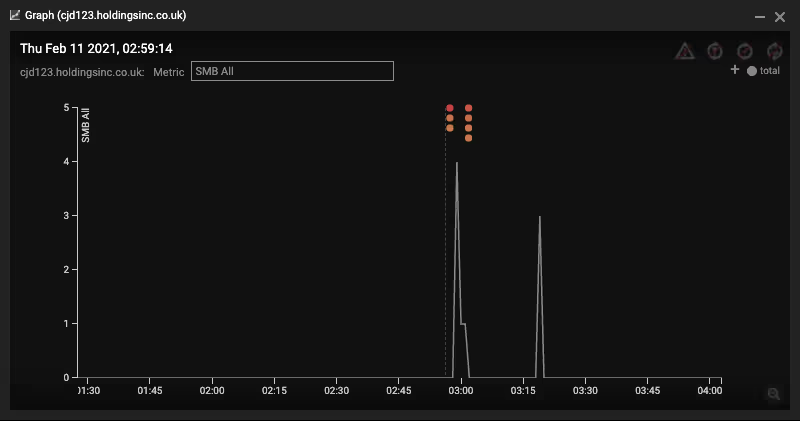
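The two indicators described above, an extra extension appended to existing files and a recovery note written to each folder, can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the input is a plain list of written file paths, the function name `summarize_writes` and the example paths are hypothetical, and only the `.lockbit` extension and the `Restore-My-Files.txt` name come from the write-up.

```python
from collections import Counter
from pathlib import PurePosixPath

RANSOM_EXTENSION = ".lockbit"        # extension named in the write-up
NOTE_NAME = "Restore-My-Files.txt"   # recovery file named in the write-up


def summarize_writes(paths):
    """Count files with an appended ransom extension and notes per folder."""
    appended = 0
    notes_per_folder = Counter()
    for raw in paths:
        p = PurePosixPath(raw)
        suffixes = p.suffixes
        # "report.docx.lockbit" has suffixes [".docx", ".lockbit"]: an extra
        # extension appended on top of an existing one.
        if len(suffixes) >= 2 and suffixes[-1].lower() == RANSOM_EXTENSION:
            appended += 1
        elif p.name == NOTE_NAME:
            notes_per_folder[str(p.parent)] += 1
    return appended, notes_per_folder


# Hypothetical write events, for illustration only.
writes = [
    "finance/report.docx.lockbit",
    "finance/Restore-My-Files.txt",
    "hr/staff.xlsx.lockbit",
    "hr/Restore-My-Files.txt",
    "hr/readme.txt",
]
appended_count, notes = summarize_writes(writes)
print(appended_count, dict(notes))
```

A known extension and note name only help after a strain has been documented; the write-up's point is that the behavior (many files gaining an additional extension in a short time) was flagged as unusual for the device regardless of the specific strings.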

The encryption host was a critical device that regularly utilized SMB. Exploiting SMB is a popular tactic for cyber-criminals. Such tools are so frequently used that it is difficult for signature-based detection methods to identify quickly whether their activity is malicious or not. In this case, Darktrace’s ‘Unusual Activity’ score for the device was elevated within two seconds of the first encryption, indicating that the device was deviating from its usual pattern of behavior.

Throughout the encryption process, Darktrace also detected the device performing network reconnaissance, enumerating shares on 55 devices (via srvsvc) and scanning over 1,000 internal IP addresses on nine critical TCP ports.
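To make the scale of this reconnaissance concrete, the following Python sketch counts how many distinct internal hosts and ports each source contacts and flags sources above a fixed threshold. The static threshold is a deliberately simple stand-in chosen for illustration; it is not Darktrace's method, which the article describes as learning each device's normal behavior. The function names, the `min_hosts` parameter, and the sample addresses are all hypothetical.

```python
from collections import defaultdict


def fan_out(connections):
    """Map each source to the distinct destination IPs and ports it contacted."""
    hosts = defaultdict(set)
    ports = defaultdict(set)
    for src, dst, port in connections:
        hosts[src].add(dst)
        ports[src].add(port)
    return hosts, ports


def flag_scanners(connections, min_hosts=1000):
    """Return {source: (distinct hosts, distinct ports)} for wide fan-out sources.

    min_hosts is a hypothetical static threshold, used only for illustration.
    """
    hosts, ports = fan_out(connections)
    return {
        src: (len(dsts), len(ports[src]))
        for src, dsts in hosts.items()
        if len(dsts) >= min_hosts
    }


# Hypothetical traffic: one device touching 1,200 hosts on three ports,
# and another device talking to three hosts on SMB only.
scanner = [
    ("10.0.0.5", f"10.1.{i // 256}.{i % 256}", port)
    for i in range(1200)
    for port in (135, 445, 3389)
]
normal = [("10.0.0.9", f"10.2.0.{i}", 445) for i in range(3)]
flagged = flag_scanners(scanner + normal)
print(flagged)
```

A fixed cut-off like this would misfire on devices that legitimately talk to many hosts, such as vulnerability scanners or backup servers, which is why a per-device baseline is the more robust approach.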

During this time, ‘Patient Zero’ – the initially infected device – continued to write executable files to hidden file shares. LockBit was using the initial device to spread the malware across the digital estate, while the ‘encryption host’ performed reconnaissance and encrypted the files simultaneously.

Despite Cyber AI detecting the threat even before the encryption had begun, the security team did not have eyes on Darktrace at the time of the attack. The intrusion was thus allowed to continue and over 300,000 files were encrypted and appended with the .lockbit extension. Four servers and 15 desktop devices were affected, before the attack was stopped by the administrators.

The rise of ‘hit and run’ ransomware

While most ransomware resides inside an organization for days or weeks, LockBit’s self-governing nature allows the attacker to ‘hit and run’, deploying the ransomware with minimal interaction required after the initial intrusion. The ability to detect anomalous activity across the entire digital infrastructure in real time is therefore crucial to stopping LockBit.

WMI and SMB are relied upon by the vast majority of companies around the world, and yet they were utilized in this attack to propagate through the system and encrypt hundreds of thousands of files. The prevalence and volume of these connections make them near-impossible to monitor with humans or signature-based detection techniques alone.

Moreover, because every enterprise’s digital estate is unique, signature-based detection struggles to alert effectively on internal connections and on the volume of such connections. Darktrace, however, uses machine learning to understand the individual pattern of behavior for each device, in this case allowing it to highlight the unusual internal activity as it occurred.

The organization involved did not have Darktrace’s Autonomous Response technology configured in active mode. If enabled, it would have surgically blocked the initial WMI operations and SMB drive writes that triggered the attack whilst allowing the critical network devices to continue standard operations. Even if the foothold had been established, it would have enforced the ‘pattern of life’ of the encryption host, preventing the cascade of encryption over SMB. This demonstrates the importance of meeting machine-speed attacks with autonomous cyber security, which reacts in real time to sophisticated threats when human security teams cannot.

LockBit has the ability to encrypt thousands of files in just seconds, even when targeting well-prepared organizations. This type of ransomware, with built-in worm-like functionality, is expected to become increasingly common over 2021. Such attacks can move at a speed which no human security team alone can match. Darktrace’s approach, which uses unsupervised machine learning, can respond in seconds to these rapid attacks and shut them down in their earliest stages.

Thanks to Darktrace analyst Isabel Finn for her insights on the above threat find.

Darktrace model detections:

- Device / New or Uncommon WMI Activity

- Compliance / SMB Drive Write

- Compromise / Ransomware / Suspicious SMB Activity

- Compromise / Ransomware / Ransom or Offensive Words Written to SMB

- Anomalous File / Internal / Additional Extension Appended to SMB File

- Anomalous Connection / SMB Enumeration

- Device / Network Scan – Low Anomaly Score

- Anomalous Connection / Sustained MIME Type Conversion

- Anomalous Connection / Suspicious Read Write Ratio

- Unusual Activity / Sustained Anomalous SMB Activity

- Device / Large Number of Model Breaches
