It’s ten to five on a Friday afternoon. A technician has come in to perform a routine check on an electronic door. She enters the office with no issues: she works for a trusted third-party vendor, and employees see her every week. She opens her laptop and connects to the Door Access Control Unit, a small Internet of Things (IoT) device used to operate the smart lock. Minutes later, trojans have been downloaded onto the company network, a crypto-mining operation has begun, and there is evidence of confidential data being exfiltrated. Where did things go wrong?

Threats in a business: A new dawn surfaces

As organizations keep pace with the demands of digital transformation, the attack surface has become broader than ever before. There are numerous points of entry for a cyber-criminal – from vulnerabilities in IoT ecosystems, to blind spots in supply chains, to insiders misusing their access to the business. Darktrace sees these threats every day. Sometimes, as in the real-world example above, which this blog examines, they occur in the very same attack.

Insiders can use their familiarity with a system, and their level of access to it, as a critical advantage when evading detection and launching an attack. But insiders don’t necessarily have to be malicious. Every employee or contractor is a potential threat: clicking on a phishing link or accidentally releasing data can lead to wide-scale breaches.

At the same time, connectivity in the workspace – with each IoT device communicating with the corporate network and the Internet on its own IP address – is an urgent security issue. Access control systems, for example, add a layer of physical security by tracking who enters the office and when. However, these same control systems imperil digital security by introducing a cluster of sensors, locks, alarm systems, and keypads, which hold sensitive user information and connect to company infrastructure.

Furthermore, a significant proportion of IoT devices are built without security in mind. Vendors prioritize time-to-market and often don’t have the resources to invest in baked-in security measures. Consider the number of start-ups which manufacture IoT devices: over 60% of home automation companies have fewer than ten employees.

Insider threat detected by Cyber AI

In January 2021, a medium-sized North American company suffered a supply chain attack when a third-party vendor connected to the control unit for a smart door.

Figure 1: The attack lasted 3.5 hours in total, commencing at 16:50 local time.

The technician from the vendor’s company had come in to perform scheduled maintenance. They had been authorized to connect directly to the Door Access Control Unit, yet were unaware that the laptop they were using, brought in from outside of the organization, had been infected with malware.

As soon as the laptop connected with the control unit, the malware detected an open port, identified the vulnerability, and began moving laterally. Within minutes, the IoT device was seen making highly unusual connections to rare external IP addresses. The connections were made using HTTP and contained suspicious user agents and URIs.
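As a rough illustration of the kind of signal described above, the following Python sketch flags HTTP requests that go straight to an IP address (no hostname) with a user agent the device has not used before. It is not Darktrace’s detection logic; the function names, the sample URLs, the user-agent strings, and the `known_user_agents` baseline are all assumptions made for this example.

```python
import ipaddress
from urllib.parse import urlsplit


def is_bare_ip_host(url):
    """Return True when the URL's host is a literal IP address, not a hostname."""
    host = urlsplit(url).hostname
    if host is None:
        return False
    try:
        ipaddress.ip_address(host)
        return True
    except ValueError:
        return False


def flag_http_requests(requests, known_user_agents):
    """Return (url, user_agent) pairs sent to a bare IP with an unseen user agent."""
    return [
        (url, user_agent)
        for url, user_agent in requests
        if is_bare_ip_host(url) and user_agent not in known_user_agents
    ]


# Hypothetical traffic: documentation-range IP and made-up user agents.
requests_seen = [
    ("http://203.0.113.5/payload.bin", "hypothetical-agent/0.1"),
    ("http://updates.example.com/firmware", "DoorUnit/1.0"),
]
flagged = flag_http_requests(requests_seen, known_user_agents={"DoorUnit/1.0"})
```

A static check like this only captures one narrow pattern. The point of the case study is that the device’s behavior was judged against its own history, which a fixed rule cannot fully reproduce.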

Darktrace then detected that the control unit was attempting to download trojans and other payloads, including upsupx2.exe and 36BB9658.moe. Other connections were used to send base64 encoded strings containing the device name and the organization’s external IP address.
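To make the base64 point concrete, here is a minimal Python sketch of how an analyst might test whether a string pulled from a request decodes to readable text. The sample value is invented for illustration (a hypothetical device name and a documentation-range IP); the actual strings from the incident are not reproduced in this blog.

```python
import base64
import binascii


def try_decode_base64(token):
    """Return the decoded text if token is strict base64 for printable UTF-8, else None."""
    try:
        raw = base64.b64decode(token, validate=True)
    except (binascii.Error, ValueError):
        return None
    try:
        text = raw.decode("utf-8")
    except UnicodeDecodeError:
        return None
    return text if text.isprintable() else None


# Hypothetical payload: device name and external IP joined by a separator.
sample = base64.b64encode(b"DOOR-ACU-01|203.0.113.10").decode("ascii")
decoded = try_decode_base64(sample)
```

Base64 is an encoding, not encryption, so anyone who captures the traffic can recover the device name and IP address in this way.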

Cryptocurrency mining activity with a Monero (XMR) CPU miner was detected shortly afterwards. The device also utilized an SMB exploit to make external connections on port 445 while searching for vulnerable internal devices using the outdated SMBv1 protocol.
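SMB traffic leaving the network on port 445 is rarely expected, which makes it a simple thing to check for in connection logs. The sketch below assumes a hypothetical log of `(source_ip, destination_ip, destination_port)` tuples and treats only the RFC 1918 ranges as internal; both are simplifications for the example rather than details from the incident.

```python
import ipaddress

SMB_PORT = 445

# Assumption: the organization uses only RFC 1918 private address space internally.
INTERNAL_NETS = [
    ipaddress.ip_network(cidr)
    for cidr in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")
]


def is_internal(ip):
    """Return True when the address falls inside one of the internal ranges."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in INTERNAL_NETS)


def external_smb_connections(connections):
    """Return connections from an internal host to an external host on port 445."""
    return [
        (src, dst, port)
        for src, dst, port in connections
        if port == SMB_PORT and is_internal(src) and not is_internal(dst)
    ]


# Hypothetical log entries using private and documentation-range addresses.
log = [
    ("10.0.0.5", "203.0.113.9", 445),   # internal device to external host over SMB
    ("10.0.0.5", "10.0.0.8", 445),      # internal SMB, not flagged by this check
    ("10.0.0.5", "203.0.113.9", 80),    # external, but not SMB
]
suspicious = external_smb_connections(log)
```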

One hour later, the device connected to an endpoint related to the third-party remote access tool TeamViewer. After a few minutes, the device was seen uploading over 15 MB to a 100% rare external IP.
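The upload described above combines two signals: volume and destination rarity. A minimal Python sketch of that combination follows, assuming a hypothetical list of `(destination_ip, bytes_sent)` flow records and a set of destinations the device has contacted before. The 15 MB threshold simply mirrors the figure in this incident and is not a recommended value.

```python
from collections import defaultdict

# Assumption: 15 MB counted in decimal megabytes, taken from the incident figure.
THRESHOLD_BYTES = 15 * 1000 * 1000


def large_uploads_to_new_destinations(flows, seen_destinations,
                                      threshold_bytes=THRESHOLD_BYTES):
    """Return {destination: total_bytes} for unseen destinations above the threshold."""
    totals = defaultdict(int)
    for dst_ip, bytes_sent in flows:
        totals[dst_ip] += bytes_sent
    return {
        dst: total
        for dst, total in totals.items()
        if dst not in seen_destinations and total > threshold_bytes
    }


# Hypothetical flow records using documentation-range addresses.
flows = [
    ("198.51.100.7", 9_000_000),
    ("198.51.100.7", 7_000_000),
    ("192.0.2.1", 20_000_000),  # large, but a destination seen before
]
alerts = large_uploads_to_new_destinations(flows, seen_destinations={"192.0.2.1"})
```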

Figure 2: Timeline of the connections made by an example device on the days around an incident (blue). The connections associated with the compromise are a significant deviation from the device’s normal pattern of life, and result in multiple unusual activity events and repeated model breaches (orange).

Security threats in the supply chain

Cyber AI flagged the insider threat to the customer as soon as the control unit had been compromised. The attack had bypassed the rest of the organization’s security stack for two simple reasons: it was introduced directly from a trusted external laptop, and the IoT device itself was managed by the third-party vendor, so the customer had little visibility over it.

Traditional security tools are ineffective against supply chain attacks such as this. From the SolarWinds hack to Vendor Email Compromise, 2021 has put the final nail in the coffin of signature-based security, proving that we cannot rely on yesterday’s attacks to predict tomorrow’s threats.

International supply chains and the sheer number of different partners and suppliers which modern organizations work with thus pose a serious security dilemma: how can we allow external vendors onto our network and keep an airtight system?

The first answer is zero-trust access. This involves treating every device as potentially malicious, whether inside or outside the corporate network, and demanding verification at all stages. The second answer is visibility and response. Security products must shed clear light on cloud and IoT infrastructure, and react autonomously as soon as subtle anomalies emerge across the enterprise.

IoT investigated

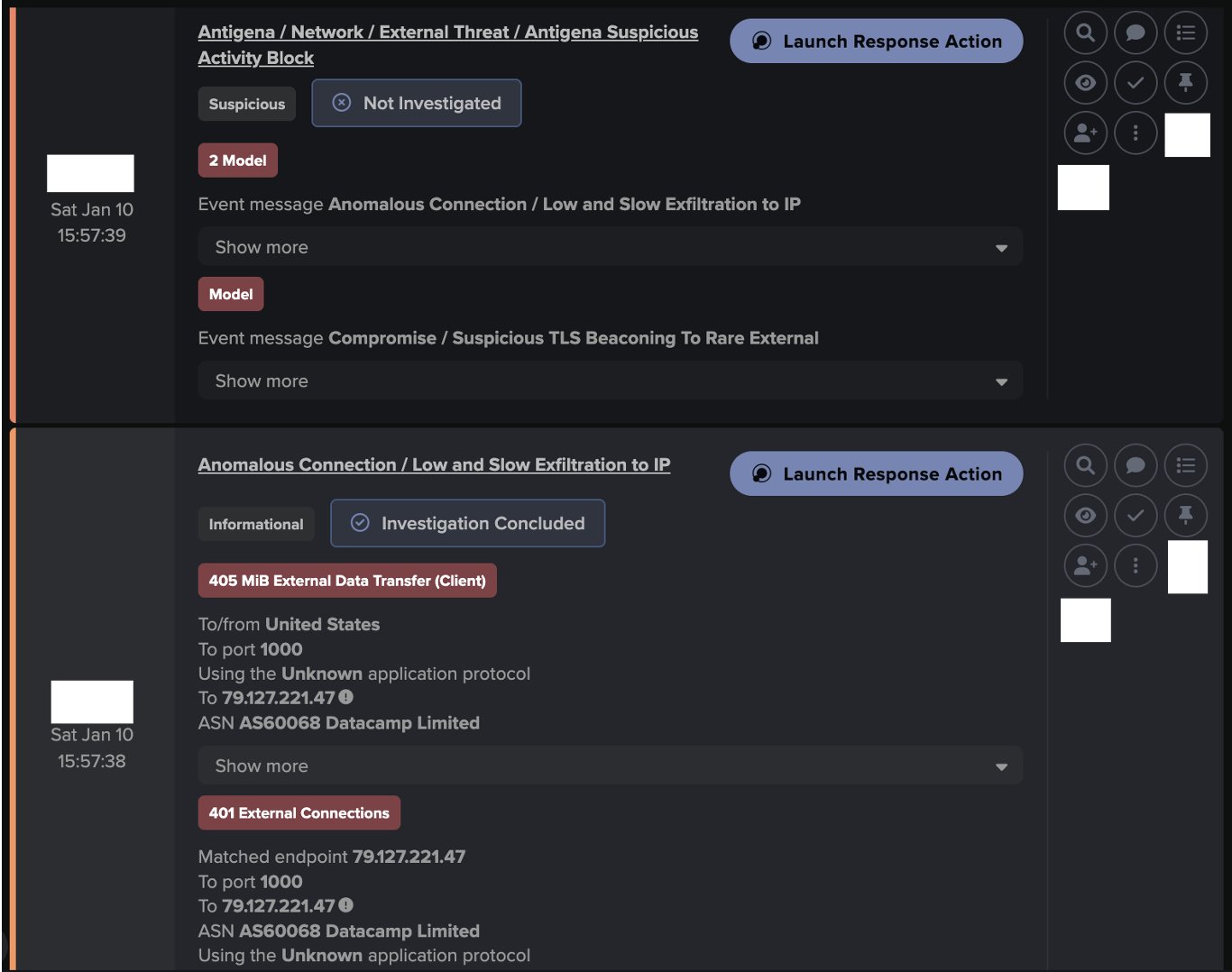

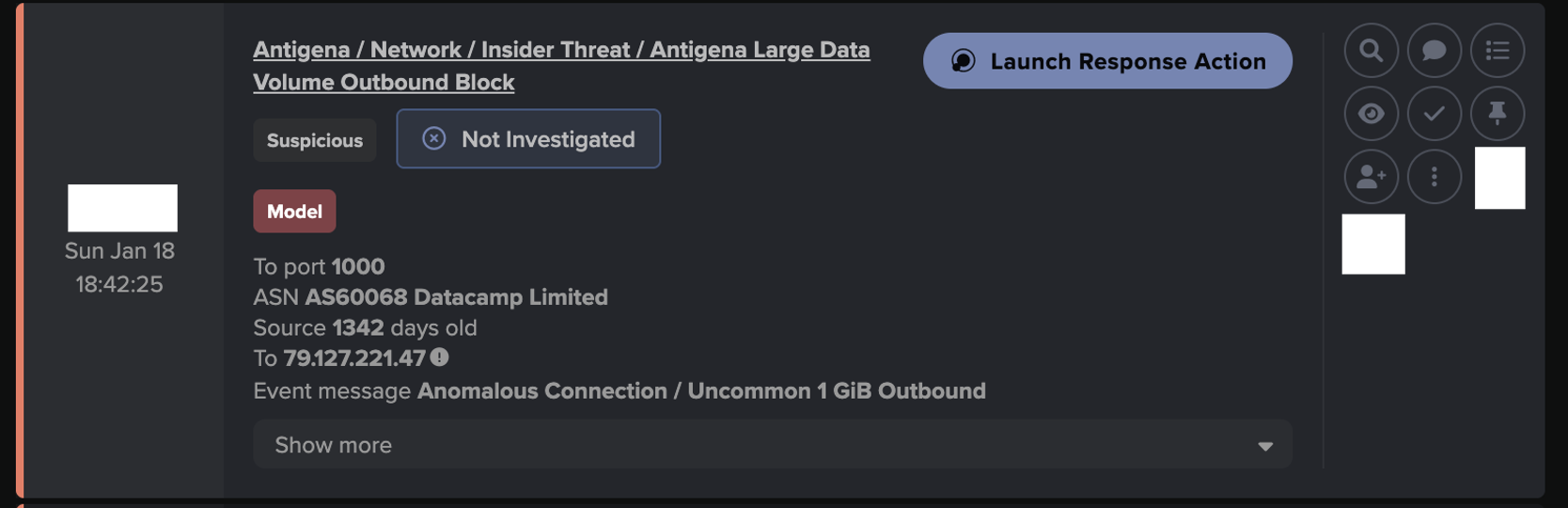

Darktrace’s Cyber AI Analyst reported on every stage of the attack, including the download of the first malicious executable file.

Figure 3: Example of Cyber AI Analyst detecting anomalous behavior on a device, including C2 connectivity and suspicious file downloads.

Cyber AI Analyst investigated the C2 connectivity, providing a high-level summary of the activity. The IoT device had accessed suspicious MOE files with randomly generated alphanumeric names.

Figure 4: A Cyber AI Analyst summary of C2 connectivity for a device.
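File names such as 36BB9658.moe have no meaningful word in them, which is one reason they stand out. The Python sketch below shows a deliberately crude heuristic for that: a long, purely alphanumeric stem that mixes letters with a majority of digits. The thresholds are assumptions chosen for this example, inferred from a single file name in this post, and would produce false positives and negatives on real traffic.

```python
from pathlib import PurePosixPath


def looks_randomly_named(filename, min_length=8, min_digit_ratio=0.5):
    """Heuristic: alphanumeric stem, long enough, digit-heavy, with at least one letter."""
    stem = PurePosixPath(filename).stem
    if len(stem) < min_length or not stem.isalnum():
        return False
    digits = sum(ch.isdigit() for ch in stem)
    letters = sum(ch.isalpha() for ch in stem)
    return letters > 0 and digits / len(stem) >= min_digit_ratio


# The first two names appear in this post; the third is a made-up benign example.
names = ["36BB9658.moe", "upsupx2.exe", "invoice2021.pdf"]
results = {name: looks_randomly_named(name) for name in names}
```

Note that upsupx2.exe is not caught by this rule, which shows why name-based checks alone are a weak signal compared with the behavioral context around the download.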

Not only did the AI detect every stage of the activity, but the customer was also alerted via a Proactive Threat Notification following a high-scoring model breach at 16:59, just minutes after the attack had commenced.

Stranger danger

Third parties coming in to tweak device settings and adjust the network can have unintended consequences. The hyper-connected world which we’re living in, with the advent of 5G and Industry 4.0, has become a digital playground for cyber-criminals.

In the real-world case study above, the IoT device was unsecured and misconfigured. With rushed creations of IoT ecosystems, intertwining supply chains, and a breadth of individuals and devices connecting to corporate infrastructure, modern-day organizations cannot expect simple security tools which rely on pre-defined rules to stop insider threats and other advanced cyber-attacks.

The organization did not have visibility over the management of the Door Access Control Unit. Despite this, and despite no prior knowledge of the attack type or the vulnerabilities present in the IoT device, Darktrace detected the behavioral anomalies immediately. Without Cyber AI, the infection could have remained in the customer’s environment for weeks or months, escalating privileges, silently crypto-mining, and exfiltrating sensitive company data.

Thanks to Darktrace analyst Grace Carballo for her insights on the above threat find.


Darktrace model detections:

- Anomalous File/Anomalous Octet Stream

- Anomalous Connection/New User Agent to IP Without Hostname

- Unusual Activity/Unusual External Connectivity

- Device/Increased External Connectivity

- Anomalous Server Activity/Outgoing from Server

- Device/New User Agent and New IP

- Compliance/Cryptocurrency Mining Activity

- Compliance/External Windows Connectivity

- Anomalous File/Multiple EXE from Rare External Locations

- Anomalous File/EXE from Rare External Location

- Device/Large Number of Model Breaches

- Anomalous File/Internet Facing System File Download

- Device/Initial Breach Chain Compromise

- Device/SMB Session Bruteforce

- Device/Network Scan - Low Anomaly Score

- Device/Large Number of Connections to New Endpoint

- Anomalous Server Activity/Outgoing from Server

- Compromise/Beacon to Young Endpoint

- Anomalous Server Activity/Rare External from Server

- Device/Multiple C2 Model Breaches

- Compliance/Remote Management Tool on Server

- Anomalous Connection/Data Sent to New External Device
