When considering email security, IT teams have historically had to choose between excluding employees entirely and including them while giving them too much power, then trying to compensate with unenforceable, trust-based policies.

However, the fact that email security should not rely on employees does not mean they should be excluded entirely. Employees interact with email daily, and their experiences and behaviors can provide valuable security insights and even influence productivity.

AI technology supports this kind of non-intrusive, nuanced employee engagement, which not only maintains email security but also enhances it.

Finding a Balance of Employee Involvement in Security Strategies

Historically, security solutions offered ‘all or nothing’ approaches to employee engagement. On one hand, when employees are involved, they are unreliable. Employees cannot all be experts in security on top of their actual job responsibilities, and mistakes are bound to happen in fast-paced environments.

Attempts to raise security awareness often fall short: training emails lack context and realism, leaving employees with a poor understanding of threats, so they frequently report emails that are actually safe. When users constantly triage their inboxes and report safe emails, they lose time from their own work and take time from the security team as well.

Other historic forms of employee involvement also put security at risk. For example, users could create blanket rules through feedback, which could lead to common problems like safe-listing every email that comes from the gmail.com domain. In other cases, employees could choose for themselves to release emails without context or limitations, introducing major risks to the organization. While these types of actions allow employees to participate in security, they do so at the cost of security.

Even lower-stakes employee involvement can prove ineffective. For example, excessive warnings when sending emails to external contacts can lead to banner fatigue. When employees see the same warning or alert at the top of every message, they soon become accustomed to it and ultimately stop noticing it.

On the other hand, when employees are fully excluded from security, an opportunity is missed to fine-tune security according to the actual users and to gain feedback on how well the email security solution is working.

Both conventional options, including employees or excluding them, therefore fail to make effective use of employees. The best email security practice strikes a balance between these two extremes, allowing more nuanced interactions that maintain security without interrupting daily business operations. This can be achieved with AI that tailors interactions to each employee so that they add to security instead of detracting from it.

Reducing False Reports While Improving Security Awareness Training

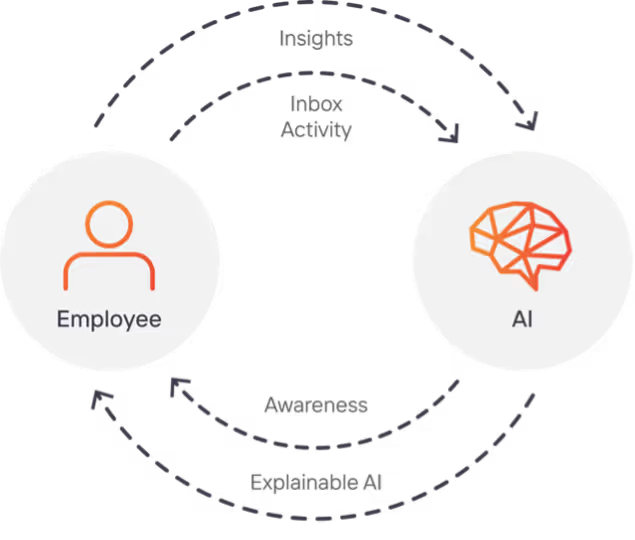

Humans and AI-powered email security can simultaneously level up by working together. AI can inform employees and employees can inform AI in an employee-AI feedback loop.

By understanding ‘normal’ behavior for every email user, AI can identify unusual, risky components of an email and take precise action based on the nature of the email to neutralize them, such as rewriting links, flattening attachments, and moving emails to junk. AI can go one step further and explain in non-technical language why it has taken a specific action, which educates users. In contrast to point-in-time simulated phishing email campaigns, this means AI can share its analysis in context and in real time at the moment a user is questioning an email.

The employee-AI feedback loop educates employees so that their feedback can serve as additional enrichment data. It determines the appropriate level at which to inform and teach users, without relying on them for threat detection.

In the other direction, the AI learns from users’ activity in the inbox and gradually factors this into its decision-making. This is not a ‘one size fits all’ mechanism – one employee marking an email as safe will never result in blanket approval across the business – but over time, patterns can be observed and autonomous decision-making enhanced.
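As a rough illustration of this direction of the loop, the sketch below shows one way per-user feedback could be accumulated without ever becoming a blanket rule. It is not Darktrace's implementation: the `FeedbackLoop` class, the `min_reports` threshold, and the capped adjustment values are all hypothetical choices made for the example.

```python
from collections import defaultdict


class FeedbackLoop:
    """Hypothetical sketch: per-user feedback that nudges, but never overrides, detection."""

    def __init__(self, min_reports: int = 3, max_adjustment: float = 0.3):
        self.min_reports = min_reports        # assumed threshold before feedback counts
        self.max_adjustment = max_adjustment  # assumed cap on feedback influence
        self._safe_marks = defaultdict(int)   # (user, sender) -> number of "safe" marks

    def mark_safe(self, user: str, sender: str) -> None:
        # Feedback is stored per user, so it cannot leak into other users' decisions.
        self._safe_marks[(user, sender)] += 1

    def trust_adjustment(self, user: str, sender: str) -> float:
        """Return a small, capped score adjustment for this user and sender only."""
        count = self._safe_marks[(user, sender)]
        if count < self.min_reports:
            return 0.0  # a single click is not yet a pattern
        return min(0.1 * (count - self.min_reports + 1), self.max_adjustment)


loop = FeedbackLoop()
for _ in range(3):
    loop.mark_safe("alice", "news@vendor.example")

print(loop.trust_adjustment("alice", "news@vendor.example"))  # repeated pattern for alice
print(loop.trust_adjustment("bob", "news@vendor.example"))    # no effect for bob
```

Keying the store on the user and sender together is what keeps one employee's choice from turning into approval across the business, and the cap means feedback can only inform a decision rather than make it.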

The employee-AI feedback loop draws out the maximum potential benefit of employee involvement in email security. Other email security solutions only consider the security team, enhancing its workflow but never considering the employees who report suspicious emails. Employees who try to do the right thing but report emails blindly never learn or improve, and they end up wasting their own time. By considering employees and improving security awareness training, the employee-AI feedback loop can level up users. They learn from the AI's explanations how to identify malicious components, and so report fewer emails with greater accuracy.

While AI programs have classically acted like black boxes, Darktrace trains its AI on the best data available, the behavior of the organization's actual employees, and invites both the security team and employees to see the reasoning behind its conclusions. Over time, employees will trust themselves more as they learn to better discern unsafe emails.

Leveraging AI to Generate Productivity Gains

Uniquely, AI-powered email security can have effects outside of security-related areas. It can save time by managing non-productive email. As the AI constantly learns employee behavior in the inbox, it becomes extremely effective at detecting spam and graymail – emails that aren't necessarily malicious, but clutter inboxes and hamper productivity. It does this on a per-user basis, specific to how each employee treats spam, graymail, and newsletters. The AI learns to detect this clutter and eventually learns which to pull from the inbox, saving time for the employees. This highlights how security solutions can go even further than merely protecting the email environment with a light touch, to the point where AI can promote productivity gains by automating tasks like inbox sorting.
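To make the per-user aspect concrete, here is a minimal sketch of how inbox behavior could drive graymail sorting. It assumes a much simpler signal than a real product would use: whether a user ignored a message from a given sender. The `GraymailLearner` class and its `min_samples` and `ignore_rate` parameters are hypothetical.

```python
from collections import defaultdict


class GraymailLearner:
    """Hypothetical sketch: learn per user which senders are clutter."""

    def __init__(self, min_samples: int = 5, ignore_rate: float = 0.8):
        self.min_samples = min_samples  # assumed minimum observations before acting
        self.ignore_rate = ignore_rate  # assumed share of ignored mail that counts as clutter
        self._stats = defaultdict(lambda: [0, 0])  # (user, sender) -> [ignored, total]

    def observe(self, user: str, sender: str, ignored: bool) -> None:
        stats = self._stats[(user, sender)]
        stats[0] += int(ignored)
        stats[1] += 1

    def should_declutter(self, user: str, sender: str) -> bool:
        """True once this user has consistently ignored mail from this sender."""
        ignored, total = self._stats[(user, sender)]
        return total >= self.min_samples and ignored / total >= self.ignore_rate


learner = GraymailLearner()
for _ in range(5):
    learner.observe("alice", "newsletter@shop.example", ignored=True)
    learner.observe("bob", "newsletter@shop.example", ignored=False)

print(learner.should_declutter("alice", "newsletter@shop.example"))  # alice never reads it
print(learner.should_declutter("bob", "newsletter@shop.example"))    # bob does
```

The same newsletter is pulled from one inbox and left in another, which is the point of learning on a per-user basis rather than applying a global spam rule.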

Preventing Email Mishaps: How to Deal with Human Error

Improved user understanding and decision-making cannot stop natural human error. Employees are bound to make mistakes and can easily send emails to the wrong people, especially when Outlook auto-fills the wrong recipient. The effects can range from embarrassing to critical, with major implications for compliance, customer trust, confidential intellectual property, and data loss.

However, AI can help reduce instances of accidentally sending emails to the wrong people. When a user goes to send an email in Outlook, the AI analyzes the recipients. It considers the contextual relationship between the sender and recipients, the relationships the recipients have with each other, how similar each recipient’s name and history are to those of other known contacts, and the names of attached files.

If the AI determines that the email is outside of a user’s typical behavior, it may alert the user. Security teams can customize what the AI does next: it can block the email, block the email but allow the user to override it, or do nothing but invite the user to think twice. Since the AI analyzes each email, these alerts are more effective than consistent, blanket alerts warning about external recipients, which often go ignored. With this targeted approach, the AI prevents data leakage and reduces cyber risk.
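One of the signals described above, a recipient that closely resembles a known contact, can be sketched in a few lines. This is an illustrative simplification that uses plain string similarity from Python's standard library rather than learned behavior, and the `Action` enum, `lookalike_of`, `check_outgoing`, and the `0.85` threshold are hypothetical names and values.

```python
from difflib import SequenceMatcher
from enum import Enum
from typing import Optional


class Action(Enum):
    """The three responses a security team could configure (names are assumptions)."""
    BLOCK = "block"
    BLOCK_WITH_OVERRIDE = "block_with_override"
    WARN = "warn"


def lookalike_of(recipient: str, known_contacts: set, threshold: float = 0.85) -> Optional[str]:
    """Return the known contact the recipient most closely resembles, if any."""
    if recipient in known_contacts:
        return None  # the sender already has history with this exact address
    best, best_ratio = None, threshold
    for contact in known_contacts:
        ratio = SequenceMatcher(None, recipient.lower(), contact.lower()).ratio()
        if ratio >= best_ratio:
            best, best_ratio = contact, ratio
    return best


def check_outgoing(recipients: list, known_contacts: set, configured_action: Action) -> list:
    """Flag only recipients that look like a slip, and attach the configured response."""
    alerts = []
    for recipient in recipients:
        match = lookalike_of(recipient, known_contacts)
        if match is not None:
            alerts.append((recipient, match, configured_action))
    return alerts


known = {"jane.doe@acme.com", "bob@partner.org"}
for alert in check_outgoing(["jane.doe@acme.co", "bob@partner.org"], known, Action.WARN):
    print(alert)
```

Because an alert is raised only for the near-miss address and not for every external recipient, the user sees a warning in the rare case where it matters, which is what keeps it from being ignored.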

Since the AI is always on and continuously learning, it can adapt autonomously to employee changes. If the role of an employee evolves, the AI will learn the new normal, including common behaviors, recipients, attached file names, and more. This allows the AI to continue effectively flagging potential instances of human error, without needing manual rule changes or disrupting the employee’s workflow.

Email Security Informed by Employee Experience

As the everyday users of email, employees should be considered when designing email security. This employee-conscious lens on security can strengthen defenses, improve productivity, and prevent data loss.

In these ways, email security can benefit both employees and security teams. Employees can become another layer of defense with improved security awareness training that cuts down on false reports of safe emails. The same insight into employee email behavior can also enhance productivity, as the AI learns how each user treats graymail and sorts it accordingly. Finally, viewing security in relation to employees can help security teams deploy tools that reduce data loss by flagging misdirected emails. With these capabilities, Darktrace/Email™ enables security teams to optimize the balance of employee involvement in email security.
