“The next phase in our journey toward autonomous security is Autonomous Response decision-making.”

Lawrence Pingree, Research Vice President, Gartner

We’ve talked extensively on this blog about Autonomous Response: the AI-powered technology that, according to Gartner, represents a paradigm shift in cyber defense. As the first Autonomous Response tool of its kind, Darktrace Antigena has already thwarted countless cyber-attacks, from a spear phishing campaign against a major city to an IoT smart locker attack targeting a popular amusement park. In each case, Antigena’s surgical intervention gave the security team the time it needed to investigate, stopping the clock within seconds by containing only the malicious behavior.

For all its benefits, however, Autonomous Response does have one drawback: it can make for slightly anticlimactic blog posts. In place of captivating, step-by-step descriptions of malware spreading throughout the enterprise and inflicting irrevocable damage, Antigena case studies end a mere moment after they start, with the “patient zero” employee completely unaware of the compromise that could have been.

In this particular case, however, Antigena was deployed in Human Confirmation Mode, a starter mode in which the AI’s actions must first be approved by the security team. Because that approval never came, the result was both an in-depth look at a sophisticated ransomware attack and a remarkable illustration of how Antigena reacted in real time to every stage of that attack’s lifecycle:

Initial download

Patient zero here was a device that Darktrace detected downloading an executable file from a server with which no other device on the network had ever communicated. Downloads like this one regularly bypass conventional endpoint tools, since those tools cannot be programmed in advance to catch the full range of unpredictable future threats. By contrast, because Darktrace AI had learned the typical behavior of the company’s unique users and devices while ‘on the job’, it readily identified the download as anomalous.

Had Antigena been in Active Mode at the time, this would have marked the end of the blog post. By blocking all connections to the associated IP and port, Antigena would have instantly stopped the download — without otherwise impacting the device at all.
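The core signal here, an executable fetched from an endpoint that no other device on the network has ever contacted, can be illustrated with a toy rarity tracker. The sketch below is a simplification under stated assumptions, not Darktrace’s actual model: `EndpointRarity` and `flag_download` are hypothetical names, “rare” simply means no other device has been seen talking to the endpoint, and the `.exe`/`.dll` suffix check stands in for proper file-type detection.

```python
from collections import defaultdict


class EndpointRarity:
    """Hypothetical sketch: remember which internal devices have contacted each external endpoint."""

    def __init__(self) -> None:
        # endpoint (hostname or IP) -> set of internal device IDs seen contacting it
        self._seen_by = defaultdict(set)

    def observe(self, device: str, endpoint: str) -> bool:
        """Record a connection; return True if no *other* device had contacted this endpoint before."""
        no_other_devices = not (self._seen_by[endpoint] - {device})
        self._seen_by[endpoint].add(device)
        return no_other_devices


def flag_download(rarity: EndpointRarity, device: str, endpoint: str, filename: str) -> bool:
    """Flag an executable download from an endpoint that is rare for the whole network."""
    # Crude stand-in for real file-type detection (assumption for illustration).
    is_executable = filename.lower().endswith((".exe", ".dll"))
    # observe() is evaluated first, so the endpoint is recorded even when nothing is flagged.
    return rarity.observe(device, endpoint) and is_executable
```

For instance, once two laptops have both reached `updates.example.com`, a third laptop downloading from it is not flagged, whereas its first download of `invoice.exe` from `203.0.113.7` is.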

Command and control

Following the download, Darktrace observed the device making an HTTP GET request to the same rare endpoint. This continued suspicious activity prompted Antigena to escalate its recommended response: it would now have blocked all outgoing traffic from the breached device to prevent the infection from spreading.

Darktrace then detected the device making yet more unusual external connections to endpoints that, in many cases, presented self-signed SSL certificates. Because self-signed certificates are not verified by a trusted certificate authority, they are frequently used by cyber-criminals. These outgoing connections were therefore likely the installed malware communicating with its command and control infrastructure, as Darktrace flagged below:

Figure 3: Darktrace alerts on the suspicious SSL certificates.

Figure 4: Antigena recommends taking action to block the connections in question.
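A common first-pass heuristic for spotting a self-signed certificate is to compare its issuer with its subject. The sketch below assumes the certificate has already been parsed into the dictionary shape returned by Python’s `ssl.SSLSocket.getpeercert()` (nested tuples of `(attribute, value)` pairs). Note that `getpeercert()` returns an empty dictionary for a certificate that was not validated, so a monitor inspecting untrusted servers would more likely parse the DER bytes from `getpeercert(binary_form=True)` with a library such as `cryptography`. Matching names is only a hint: trusted root certificates are themselves self-signed, and a rigorous check would also verify the signature with the certificate’s own public key. The example certificate values are hypothetical.

```python
def _flatten_name(name) -> frozenset:
    """Turn getpeercert()-style RDN tuples into a set of (attribute, value) pairs."""
    return frozenset(pair for rdn in name for pair in rdn)


def looks_self_signed(cert: dict) -> bool:
    """Heuristic: True when the certificate names itself as its own issuer."""
    subject = _flatten_name(cert.get("subject", ()))
    issuer = _flatten_name(cert.get("issuer", ()))
    # An empty subject gives nothing to compare, so it is not flagged.
    return bool(subject) and subject == issuer


# Example certificates in getpeercert() dictionary form (hypothetical values).
SELF_SIGNED = {
    "subject": ((("commonName", "c2.example"),),),
    "issuer": ((("commonName", "c2.example"),),),
}
CA_ISSUED = {
    "subject": ((("commonName", "www.example.com"),),),
    "issuer": ((("organizationName", "Example CA"),), (("commonName", "Example CA R1"),)),
}
```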

Internal reconnaissance

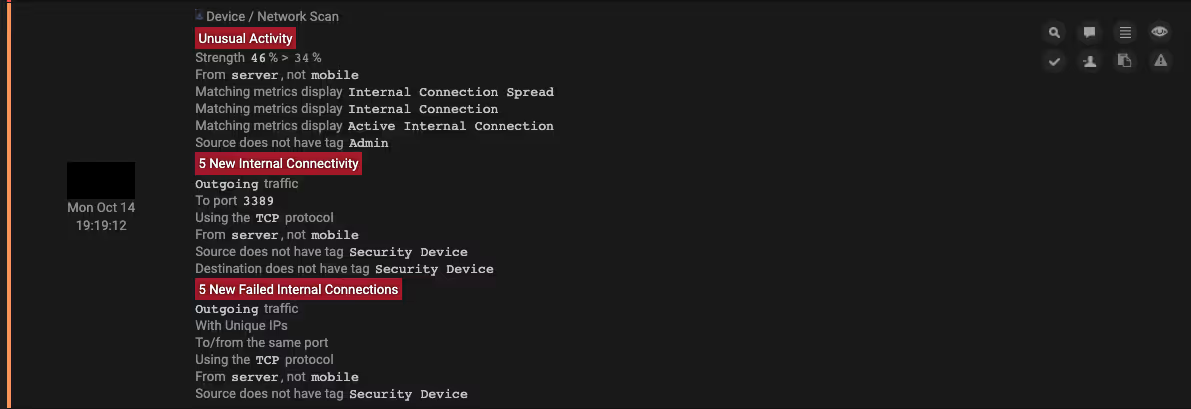

Beyond its unusual external activity, the breached device also began to deviate significantly from its typical pattern of internal behavior. Darktrace detected it making over 160,000 failed internal connections on two key ports: Remote Desktop Protocol (RDP) port 3389 and SMB port 445. This activity, known as network scanning, provides crucial reconnaissance, giving the attacker insight into the network’s structure, the services available on each device, and any potential vulnerabilities. Ports 3389 and 445 are especially common targets.
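Scanning of this kind leaves a distinctive footprint: a large number of failed connections from one device, on a handful of ports, to many different destinations. The sketch below counts failed connections per (source, port) for SMB and RDP and flags a source only when the count is far above that device’s own historical norm, echoing the idea of a learned baseline rather than a single global rule. `Conn`, `scan_suspects`, the `baseline` dictionary, and the `factor` and `floor` values are all hypothetical choices for illustration, not Darktrace parameters.

```python
from collections import Counter, defaultdict
from typing import Iterable, NamedTuple


class Conn(NamedTuple):
    src: str
    dst: str
    port: int
    succeeded: bool


WATCHED_PORTS = {445: "SMB", 3389: "RDP"}


def scan_suspects(
    conns: Iterable[Conn],
    baseline: dict,
    factor: float = 10.0,
    floor: int = 100,
) -> dict:
    """Return {(src, port): (failed_count, distinct_targets)} for likely scanners.

    `baseline` maps (src, port) to the device's typical failed-connection count for
    the same time window; unseen pairs default to 0. A pair is flagged when it has at
    least `floor` failures and more than `factor` times its usual count.
    """
    failures = Counter()
    targets = defaultdict(set)
    for c in conns:
        if c.port in WATCHED_PORTS and not c.succeeded:
            failures[(c.src, c.port)] += 1
            targets[(c.src, c.port)].add(c.dst)

    flagged = {}
    for key, count in failures.items():
        expected = baseline.get(key, 0.0)
        if count >= floor and count > factor * expected:
            flagged[key] = (count, len(targets[key]))
    return flagged
```

A backup server that routinely logs a hundred or so failed SMB connections stays quiet under this rule, while a workstation that suddenly fails against a thousand distinct hosts on port 445 is flagged.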

The unusual external connections to endpoints with self-signed SSL certificates, combined with the highly anomalous internal connectivity from the device, would have caused Antigena to escalate further. Alas, the attack proceeded.

Darktrace detected no further anomalous activity from patient zero for the next four days, perhaps a tactic to stay under the radar. This period of dormancy ended when the device once again connected to a rare domain with a self-signed SSL certificate, likely reaching out to its command and control infrastructure for additional instructions.

Lateral movement

A day later, in a sign that the earlier scanning had been at least somewhat fruitful, the infected device performed a large volume of unusual SMB activity consistent with the malware attempting to move laterally across the network. Darktrace picked up on the breached device making unusual outgoing SMB writes associated with the remote administration tool PsExec to a total of 38 destination devices, 28 of which it compromised with a malicious file.

Darktrace recognized this activity as highly anomalous for this particular device, which does not usually communicate with these destination devices in this manner. Antigena would therefore have surgically blocked the remote administration behavior, first by containing patient zero to its normal ‘pattern of life’, and then, had lateral movement continued, by escalating to blocking all outgoing connections from the device. Antigena’s escalation can be seen below: the first action was taken at 08:03, and the second, more severe action at 08:43.
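PsExec classically works by copying a service executable (PSEXESVC.exe) to the target’s ADMIN$ share over SMB and then starting it remotely, which is why unusual SMB writes of executables are a useful lateral-movement signal. The sketch below flags a device that writes executables to administrative shares on more new destinations than a small threshold allows, where “new” means outside a hypothetical `usual_peers` map standing in for the device’s learned pattern of life. `SmbWrite`, `suspicious_remote_exec`, and `max_new_peers` are illustrative names under our own assumptions, not part of any product.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class SmbWrite(NamedTuple):
    src: str
    dst: str
    share: str  # e.g. "ADMIN$" or "C$"
    filename: str


ADMIN_SHARES = {"ADMIN$", "C$"}


def suspicious_remote_exec(
    writes: Iterable[SmbWrite],
    usual_peers: dict,
    max_new_peers: int = 3,
) -> dict:
    """Return {src: set_of_new_destinations} for devices exceeding `max_new_peers`.

    Only executable writes to administrative shares count, and only when the
    destination is not one the source normally talks to over SMB.
    """
    new_dsts = defaultdict(set)
    for w in writes:
        is_admin_exe = w.share.upper() in ADMIN_SHARES and w.filename.lower().endswith(".exe")
        if is_admin_exe and w.dst not in usual_peers.get(w.src, set()):
            new_dsts[w.src].add(w.dst)
    return {src: dsts for src, dsts in new_dsts.items() if len(dsts) > max_new_peers}
```

An administrator’s workstation that pushes PsExec to a single new host stays under the threshold, whereas a device fanning out to dozens of hosts it has never written to is flagged.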

Encryption

Darktrace observed the first sign of the ransomware’s ultimate objective, encrypting files, on a different device, which also performed a large volume of unusual SMB activity. After accessing a multitude of SMB shares it had never accessed before, the device systematically appended the .locked extension to files on those shares. When all was said and done, this encryption activity was seen from no fewer than 40 internal devices.
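The encryption stage combines two signals described above: a device touching SMB shares it has never accessed before, and a burst of files being renamed with the `.locked` suffix. The sketch below counts such renames per device and flags a device only when the burst is large and includes previously unseen shares. `FileOp`, `encryption_suspects`, `known_shares`, and `min_renames` are hypothetical; real ransomware families use many different extensions, so a fixed suffix match is only a stand-in for behavior-based detection.

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class FileOp(NamedTuple):
    device: str
    share: str
    old_name: str
    new_name: str


def encryption_suspects(
    ops: Iterable[FileOp],
    known_shares: dict,
    suffix: str = ".locked",
    min_renames: int = 50,
) -> dict:
    """Return {device: (rename_count, new_share_count)} for likely encryptors."""
    renames = defaultdict(int)
    new_shares = defaultdict(set)
    for op in ops:
        # Ransomware-style rename: the original name with the suffix appended.
        if op.new_name == op.old_name + suffix:
            renames[op.device] += 1
            if op.share not in known_shares.get(op.device, set()):
                new_shares[op.device].add(op.share)
    return {
        device: (count, len(new_shares[device]))
        for device, count in renames.items()
        if count >= min_renames and new_shares[device]
    }
```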

In Active Mode, Antigena Ransomware Block would have fully quarantined the affected devices, the culmination of increasingly severe Antigena actions spanning the initial infection of patient zero, the command and control communication, the internal reconnaissance, the lateral movement, and finally the file encryption.

Figure 8: Antigena Ransomware Block was fully armed and prepared to fight back against the infection.
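The escalation described above can be modeled as a ladder of response levels that only ever moves upward. The sketch below encodes hypothetical levels drawn from the actions mentioned in this post (blocking a single IP and port, enforcing the device’s pattern of life, blocking all outgoing traffic, full quarantine) and keeps the more severe of the current and newly recommended action. The stage names and the stage-to-action mapping are our illustrative assumptions, not Antigena’s actual policy.

```python
from enum import IntEnum
from typing import Iterable


class Action(IntEnum):
    """Hypothetical response levels, from least to most severe."""
    NONE = 0
    BLOCK_ENDPOINT = 1           # block connections to one IP and port
    ENFORCE_PATTERN_OF_LIFE = 2  # allow only the device's normal behavior
    BLOCK_ALL_OUTGOING = 3       # block every outgoing connection from the device
    QUARANTINE = 4               # isolate the device entirely


# Illustrative mapping from observed stage to recommended action (assumption).
STAGE_ACTION = {
    "rare_download": Action.BLOCK_ENDPOINT,
    "c2_traffic": Action.BLOCK_ALL_OUTGOING,
    "internal_scan": Action.BLOCK_ALL_OUTGOING,
    "lateral_movement": Action.ENFORCE_PATTERN_OF_LIFE,
    "file_encryption": Action.QUARANTINE,
}


def escalate(current: Action, stage: str) -> Action:
    """Never de-escalate: keep the more severe of the current and the stage's action."""
    return max(current, STAGE_ACTION.get(stage, Action.NONE))


def replay(stages: Iterable[str]) -> list:
    """Return (stage, action in force after that stage) for each observed stage."""
    action = Action.NONE
    history = []
    for stage in stages:
        action = escalate(action, stage)
        history.append((stage, action))
    return history
```

Replaying this post’s stages in order yields `BLOCK_ENDPOINT`, then `BLOCK_ALL_OUTGOING` for the command and control and scanning stages (which in this toy model subsumes the pattern-of-life step for lateral movement), and finally `QUARANTINE` once encryption appears.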

The case for boring blog posts

No other approach to cyber security is able to track ransomware so comprehensively throughout its lifecycle. Programming legacy tools to flag all remote administration behavior, for instance, would inundate security teams with thousands of false positive alerts. Only Darktrace’s understanding of ‘self’ for each device can shed light on such activities in the rare cases when they are anomalous.

However, intriguing though it may be to track this lifecycle to conclusion, the technology to write far less intriguing blog posts already exists and is already proven. Autonomous Response will render this kind of threat story a relic of the past, and for organizations with sensitive data and critical intellectual property to safeguard, the days of boring security blogs cannot come soon enough.
