Out of all the services honeypotted by Darktrace, Docker is the most commonly attacked, with new strains of malware emerging daily. This blog will analyze a novel malware campaign with a unique obfuscation technique and a new cryptojacking technique.

What is obfuscation?

Obfuscation is a common technique employed by threat actors to prevent signature-based detection of their code, and to make analysis more difficult. This novel campaign uses an interesting technique for obfuscating its payload.

Docker image analysis

The attack begins with a request to launch a container from Docker Hub, specifically the kazutod/tene:ten image. Using Docker Hub’s layer viewer, an analyst can quickly identify what the container is designed to do. In this case, the container is designed to run the ten.py script, which is built into the image.

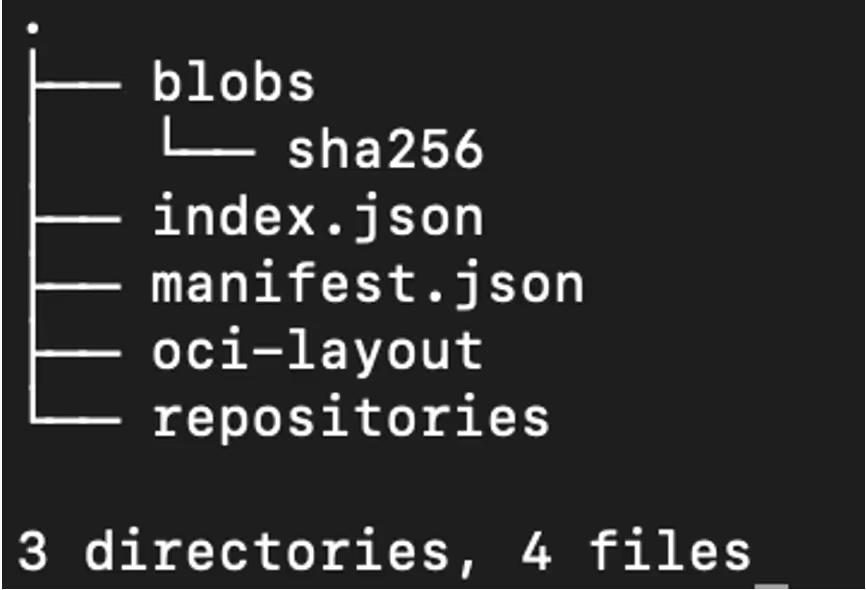

To gain more information on the Python file, Docker’s built-in tooling can be used to download the image (docker pull kazutod/tene:ten) and then save it into a format that is easier to work with (docker image save kazutod/tene:ten -o tene.tar). The resulting archive can then be extracted as a regular tar file for further investigation.

The Docker image uses the OCI format, which is a little different to a regular file system. Instead of having a static folder of files, the image consists of layers. Indeed, when running the file command over the sha256 directory, each layer is shown as a tar file, along with a JSON metadata file.
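As a rough illustration of the layered layout, the sketch below reads the manifest.json file that docker image save places at the top of the archive and returns the layer blobs in the order they are applied. It assumes tene.tar has already been unpacked into a directory; the function name list_layers and the directory name are hypothetical, and the exact archive contents can vary between Docker versions.

```python
import json
from pathlib import Path


def list_layers(image_dir):
    """Map each image's tags to its ordered list of layer blob paths.

    Assumes image_dir holds an unpacked `docker image save` archive with a
    top-level manifest.json (a list of entries with "RepoTags" and "Layers").
    """
    manifest_path = Path(image_dir) / "manifest.json"
    manifest = json.loads(manifest_path.read_text())
    layers_by_image = {}
    for entry in manifest:
        # RepoTags can be missing or null for untagged images.
        tags = ", ".join(entry.get("RepoTags") or ["<untagged>"])
        layers_by_image[tags] = list(entry["Layers"])
    return layers_by_image
```

Calling list_layers("tene") on the unpacked archive would then show which blobs under blobs/sha256 are file system layers rather than JSON metadata.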

As the detailed layers are not necessary for analysis, a single command can be used to extract all of them into a single directory, recreating what the container file system would look like:

mkdir -p root_dir && find blobs/sha256 -type f -exec sh -c 'file "$1" | grep -q "tar archive" && tar -xf "$1" -C root_dir' sh {} \;
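The same flattening step can be sketched in Python for analysts who prefer to stay in one language. This is a minimal sketch, not a faithful container runtime: the function names are hypothetical, blobs are processed in filename order rather than manifest order, whiteout files are ignored, and only regular files are unpacked, which is assumed to be enough for locating a script. Because the image is untrusted, members whose paths would land outside the output directory are skipped.

```python
import tarfile
from pathlib import Path


def _is_inside(base, name):
    """True if base/name resolves to a location inside base."""
    target = (base / name).resolve()
    return base.resolve() in (target, *target.parents)


def extract_layers(blob_dir, out_dir):
    """Unpack every tar-formatted blob under blob_dir into out_dir."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    extracted = []
    for blob in sorted(Path(blob_dir).iterdir()):
        # JSON manifests and configs are not tar archives, so skip them.
        if not blob.is_file() or not tarfile.is_tarfile(str(blob)):
            continue
        with tarfile.open(str(blob)) as layer:
            safe = [m for m in layer.getmembers()
                    if m.isfile() and _is_inside(out, m.name)]
            kwargs = {"members": safe}
            if hasattr(tarfile, "data_filter"):
                # Newer Python versions offer a stricter extraction filter.
                kwargs["filter"] = "data"
            layer.extractall(str(out), **kwargs)
        extracted.append(blob.name)
    return extracted
```

For example, extract_layers("blobs/sha256", "root_dir") would return the names of the blobs that were treated as layers.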

The find command can then be used to quickly locate where the ten.py script is.

find root_dir -name ten.py

root_dir/app/ten.py

The script may look complicated at first glance; however, once broken down, it is fairly simple. It defines a lambda function (effectively a variable that contains executable code) that reverses its input, base64-decodes the result, and then zlib-decompresses it. The script then calls the lambda function with the base64 string as input and passes the result to exec, which runs the decoded string as Python code.

To help illustrate this, the code can be cleaned up to this simplified function:

import base64
import zlib

def decode(data):
    # Undo the three obfuscation steps in order: reverse, base64, zlib
    reversed_data = data[::-1]
    decoded = base64.b64decode(reversed_data)
    decompressed = zlib.decompress(decoded)
    return decompressed

decoded_string = decode(the_big_text_blob)
exec(decoded_string)  # run the decoded string
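To sanity-check the simplified function without touching the real sample, the round trip below builds a harmless payload the same way (zlib-compress, base64-encode, reverse) and confirms that decoding recovers it. The encode helper and the sample string are hypothetical and exist only for this illustration; they are not taken from the attacker's tooling.

```python
import base64
import zlib


def encode(source: bytes) -> bytes:
    """Obfuscate: compress, base64-encode, then reverse the bytes."""
    return base64.b64encode(zlib.compress(source))[::-1]


def decode(blob: bytes) -> bytes:
    """De-obfuscate: reverse, base64-decode, then decompress."""
    return zlib.decompress(base64.b64decode(blob[::-1]))


sample = b"print('hello from the inner layer')"
blob = encode(sample)

# The obfuscated form shares no readable text with the original...
assert b"print" not in blob
# ...but one call to decode recovers it exactly.
assert decode(blob) == sample
```

Because each step is trivially reversible, the scheme hides strings from signature matching but offers no resistance to an analyst who recognizes the pattern.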

The same steps can then be set up as a recipe in CyberChef, an online tool for data manipulation, to decode the payload.

The decoded payload calls the decode function again and puts the output into exec. Copying and pasting the new payload into the input shows that it does this another time. Instead of copy-pasting the output into the input all day, a quick script can be used to decode this.

The script below uses the decode function from earlier in order to decode the base64 data and then uses some simple string manipulation to get to the next payload. The script will run this over and over until something interesting happens.

# Uses decode() from the function above; `initial` holds the
# base64 string taken from ten.py
# Decode the initial base64
decoded = decode(initial)

# Remove the first 11 characters and last 3
# so we just have the next base64 string
clamped = decoded[11:-3]

for i in range(1, 100):
    # Decode the new payload
    decoded = decode(clamped)

    # Print it with the current step so we
    # can see what's going on
    print(f"Step {i}")
    print(decoded)

    # Fetch the next base64 string from the
    # output, so the next loop iteration will
    # decode it
    clamped = decoded[11:-3]

After 63 iterations, the script returns actual code, accompanied by an error from the decode function, as a stopping condition was never defined. It is not clear what the attacker’s motive for applying so many layers of obfuscation was, as several rounds of obfuscation are unlikely to be meaningfully better than one at bypassing signature analysis. It’s possible this is an attempt to stop analysts or other hackers from reverse engineering the code. However, it took a matter of minutes to thwart their efforts.
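For completeness, a variant with an explicit stopping condition might look like the sketch below. It runs against a synthetic nested payload rather than the real sample. The wrapper text in PREFIX and SUFFIX is an assumption inferred only from the slice lengths (11 characters before the blob and 3 after); the actual wrapper in the sample may differ, and the wrap and peel helpers are hypothetical.

```python
import base64
import binascii
import zlib

# Assumed wrapper around each layer: 11 bytes before the blob, 3 after.
# The exact text is a guess; only the lengths come from the [11:-3] slice.
PREFIX = b"exec((_)(b'"
SUFFIX = b"'))"


def decode(blob):
    return zlib.decompress(base64.b64decode(blob[::-1]))


def wrap(source, layers):
    """Build a harmless nested test payload with the assumed wrapper."""
    for _ in range(layers):
        blob = base64.b64encode(zlib.compress(source))[::-1]
        source = PREFIX + blob + SUFFIX
    return source


def peel(payload, max_layers=1000):
    """Strip layers until the payload no longer looks like a wrapper."""
    steps = 0
    while steps < max_layers:
        if not (payload.startswith(PREFIX) and payload.endswith(SUFFIX)):
            break  # reached something that is not another layer
        try:
            payload = decode(payload[len(PREFIX):-len(SUFFIX)])
        except (binascii.Error, zlib.error):
            break  # looked like a wrapper but did not decode
        steps += 1
    return payload, steps


final, count = peel(wrap(b"print('innermost stage')", 5))
print(count, final)
```

Checking the wrapper before decoding, rather than relying on an exception, makes the loop stop cleanly on the first layer that is ordinary code.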

Cryptojacking 2.0?

The cleaned-up code indicates that the malware attempts to set up a connection to teneo[.]pro, which appears to belong to a Web3 startup company.

Teneo appears to be a legitimate company, with Crunchbase reporting that they have raised USD 3 million as part of their seed round [1]. Their service allows users to join a decentralized network, to “make sure their data benefits you” [2]. Practically, their node functions as a distributed social media scraper. In exchange for doing so, users are rewarded with “Teneo Points”, which are a private crypto token.

The malware script simply connects to the websocket and sends keep-alive pings in order to gain more points from Teneo and does not do any actual scraping. Based on the website, most of the rewards are gated behind the number of heartbeats performed, which is likely why this works [2].

Judging by the attacker’s Docker Hub profile, this sort of attack seems to be their modus operandi. The most recent container runs an instance of the Nexus network client, a project that performs distributed zero-knowledge compute tasks in exchange for cryptocurrency.

Traditional cryptojacking attacks typically rely on using XMRig to mine cryptocurrency directly. However, as XMRig is widely detected, attackers are shifting to alternative methods of generating crypto. Whether this is more profitable remains to be seen. There is not currently an easy way to determine the earnings of the attackers due to the more “closed” nature of the private tokens. Translating a user ID to a wallet address does not appear to be possible, and there is limited public information about the tokens themselves. For example, the Teneo token is listed as “preview only” on CoinGecko, with no price information available.

Conclusion

This blog explores an example of Python obfuscation and how to unravel it. Obfuscation remains a ubiquitous technique employed by the majority of malware to aid in detection/defense evasion, and being able to de-obfuscate code is an important skill for analysts to possess.

We have also seen this new avenue of cryptominers being deployed, demonstrating that attackers’ techniques are still evolving - even in tried and tested fields. The illegitimate use of legitimate tools to obtain rewards is an increasingly common vector. For example, as has been previously documented, 9hits has been used maliciously to earn rewards for the attacker in a similar fashion.

Docker remains a highly targeted service, and system administrators need to take steps to ensure it is secure. In general, Docker should never be exposed to the wider internet unless absolutely necessary, and if it is necessary, both authentication and firewalling should be employed to ensure only authorized users are able to access the service. Attacks happen every minute, and even leaving the service open for a short period of time may result in a serious compromise.
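As a quick self-check, an administrator could test from an outside vantage point whether the Docker API port accepts TCP connections. The sketch below assumes the daemon listens on 2375, the conventional port for the unencrypted Docker API; a given deployment may use a different port, and the function name is hypothetical. A successful connection only shows the port is reachable, not that the API is unauthenticated. It should only be run against hosts you are authorized to test.

```python
import socket


def docker_api_reachable(host, port=2375, timeout=2.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

If this returns True for a host's public address, the firewall rules in front of the daemon deserve a closer look.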

References

1. https://www.crunchbase.com/funding_round/teneo-protocol-seed--a8ff2ad4
