Cloud Migration Expanding the Attack Surface

Cloud migration is here to stay. Accelerated by pandemic lockdowns, the use of public cloud services has continued to increase, and Gartner has forecast worldwide public cloud spending to grow by around 20%, to almost USD 600 billion [1], in 2023. With more and more organizations using cloud services and moving their operations to the cloud, there has been a corresponding shift in malicious activity targeting cloud-based software and services, including Microsoft 365, a prominent and widely used Software-as-a-Service (SaaS) offering.

As an organization adopts and implements more SaaS products, its overall attack surface increases. This gives malicious actors additional opportunities to exploit and compromise a network, making proper controls essential. The increased attack surface can leave organizations open to cyber risks like cloud misconfigurations, supply chain attacks and zero-day vulnerabilities [2]. To achieve full visibility over cloud activity and prevent SaaS compromise, it is paramount for security teams to deploy sophisticated security measures that are able to learn an organization’s SaaS environment and detect suspicious activity at the earliest stage.

Darktrace Immediately Detects Hijacked Account

In May 2023, Darktrace observed a chain of suspicious SaaS activity on the network of a customer who was about to begin their trial of Darktrace/Apps™ and Darktrace/Email™. Despite being deployed on the network for less than a week, Darktrace DETECT™ recognized that the legitimate SaaS account, belonging to an executive at the organization, had been hijacked. Darktrace/Email was able to provide full visibility over inbound and outbound mail and identified that the compromised account was subsequently used to launch an internal spear-phishing campaign.

If Darktrace RESPOND™ were enabled in autonomous response mode at the time of this compromise, it would have been able to take swift preventative action to disrupt the account compromise and prevent the ensuing phishing attack.

Account Hijack Attack Overview

Unusual External Sources for SaaS Credentials

On May 9, 2023, Darktrace/Apps detected the first in a series of anomalous activities performed by a Microsoft 365 user account that were indicative of compromise, namely a failed login from an external IP address located in Virginia.

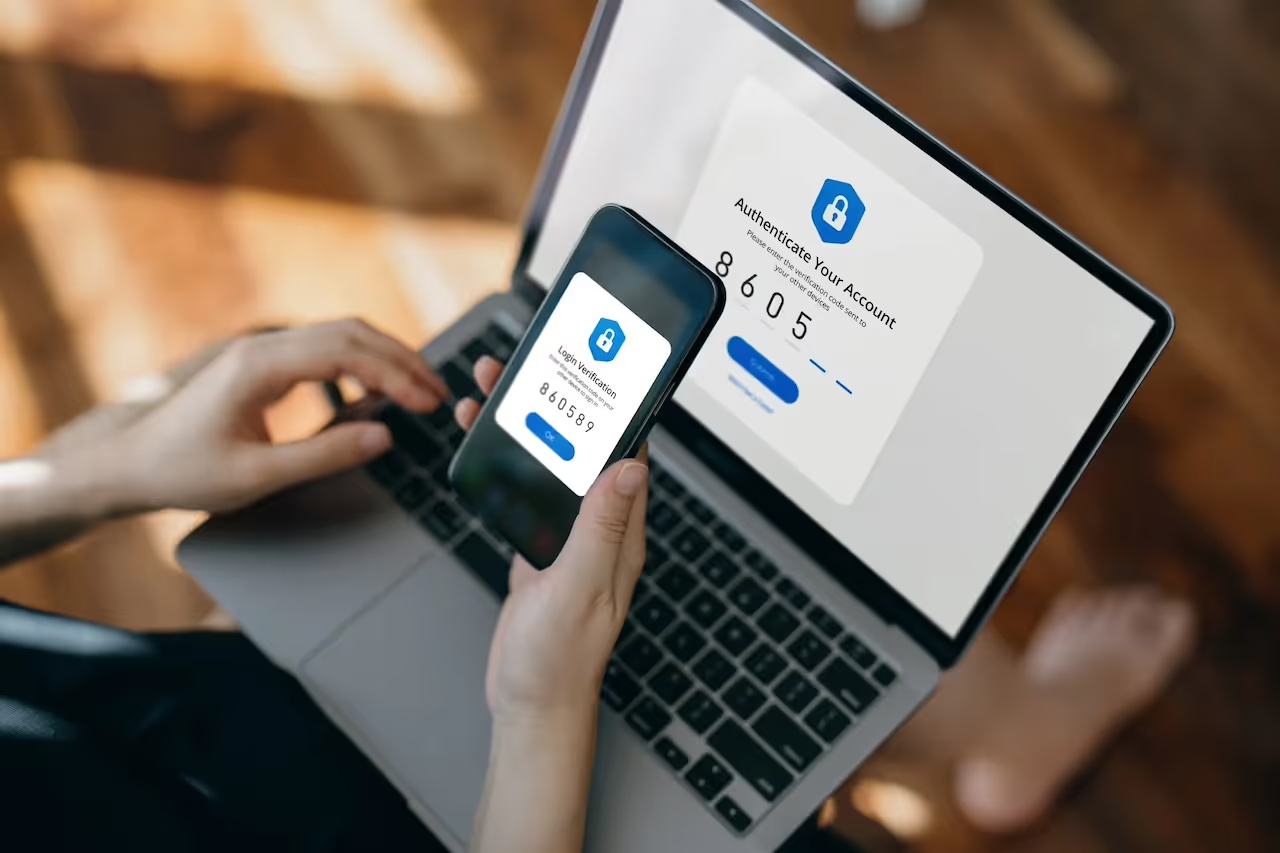

Just a few minutes later, Darktrace observed the same user credential being used to successfully log in from the same unusual IP address, with multi-factor authentication (MFA) requirements satisfied.

A few hours after this, the user credential was once again used to log in, this time from a different city in the state of Virginia, with MFA requirements successfully met again. Around the time of this activity, the SaaS user account was also observed previewing various business-related files hosted on Microsoft SharePoint, behavior that, taken in isolation, did not appear to be out of the ordinary and could have represented legitimate activity.

The following day, May 10, however, additional login attempts were observed from two different US states, namely Texas and Florida. Darktrace understood that this activity was extremely suspicious, as it was highly improbable that the legitimate user would be able to travel over 2,500 miles in such a short period of time. Both login attempts were successful and passed MFA requirements, suggesting that the malicious actor was employing techniques to bypass MFA. Such techniques could include inserting malicious infrastructure between the user and the application to intercept user credentials and tokens, or compromising browser cookies to bypass authentication controls [3]. There have also been high-profile cases in recent years of legitimate users mistakenly (and perhaps even instinctively) accepting MFA prompts on their token or mobile device, believing them to be part of a legitimate process despite not having performed the login themselves.
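The reasoning behind a "geographically improbable" login can be illustrated with a short sketch. This is not Darktrace's detection logic, which is not described here; it is a minimal, generic check that assumes each login has already been resolved to a timestamp and approximate coordinates. The `Login` type, the function names, and the 900 km/h speed ceiling are all illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0
# Assumption: anything faster than a typical airliner cruise speed is implausible.
MAX_PLAUSIBLE_KMH = 900.0


@dataclass(frozen=True)
class Login:
    """Hypothetical login record: time plus geolocated coordinates."""
    when: datetime
    lat: float
    lon: float


def haversine_km(a: Login, b: Login) -> float:
    """Great-circle distance between two login locations, in kilometres."""
    lat1, lon1, lat2, lon2 = map(radians, (a.lat, a.lon, b.lat, b.lon))
    h = (sin((lat2 - lat1) / 2) ** 2
         + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


def is_improbable_travel(a: Login, b: Login,
                         max_kmh: float = MAX_PLAUSIBLE_KMH) -> bool:
    """True if moving between the two logins would require exceeding max_kmh."""
    hours = abs((b.when - a.when).total_seconds()) / 3600
    distance = haversine_km(a, b)
    if hours == 0:
        # Simultaneous logins are only plausible from the same place.
        return distance > 0
    return distance / hours > max_kmh
```

For example, two logins half an hour apart from coordinates in Texas and Florida would be flagged, while two logins from the same location would not. In practice IP geolocation is imprecise and VPN use is common, so a check like this is better treated as one signal among several than as proof of compromise.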

New Email Rule

On the evening of May 10, following the successful logins from multiple US states, Darktrace observed the Microsoft 365 user creating a new inbox rule, named “.”, in Microsoft Outlook from an IP located in Florida. Threat actors are often observed naming new email rules with single characters, likely to evade detection, but also for the sake of expediency, so as not to spend additional time creating meaningful labels.

In this case, the newly created email rule included several suspicious properties, including ‘AlwaysDeleteOutlookRulesBlob’, ‘StopProcessingRules’ and ‘MoveToFolder’.

Firstly, ‘AlwaysDeleteOutlookRulesBlob’ suppresses or hides warning messages that typically appear if modifications to email rules are made [4]. In this case, it is likely the malicious actor was attempting to implement this property to obfuscate the creation of new email rules.

The ‘StopProcessingRules’ property meant that any subsequent email rules created by the legitimate user would be overridden by the email rule created by the malicious actor [5]. Finally, the implementation of ‘MoveToFolder’ would allow the malicious actor to automatically move all outgoing emails from the “Sent” folder to the “Deleted Items” folder, for example, further obfuscating their malicious activities [6]. The use of these email rule properties is frequently observed during account hijackings, as it allows attackers to delete and/or forward key emails, delete evidence of exploitation and launch phishing campaigns [7].
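The rule properties discussed above lend themselves to a simple review check. The sketch below is a hedged illustration, not a Darktrace model: it assumes each inbox rule has been exported as a dictionary keyed by the property names mentioned in this post, and the function name, the dictionary shape, and the choice of "hiding" folders are assumptions made for the example.

```python
# Assumption: folders an attacker might use to hide mail from the account owner.
HIDING_FOLDERS = {"deleted items"}


def _truthy(value) -> bool:
    """Accept booleans or their string forms, as exports often stringify values."""
    return str(value).strip().lower() == "true"


def suspicious_rule_indicators(rule: dict) -> list:
    """Return human-readable reasons why an inbox rule deserves a closer look."""
    reasons = []

    # Single-character (or empty) names such as "." are a common attacker habit.
    name = str(rule.get("Name", "")).strip()
    if len(name) <= 1:
        reasons.append("single-character or empty rule name")

    if _truthy(rule.get("AlwaysDeleteOutlookRulesBlob")):
        reasons.append("AlwaysDeleteOutlookRulesBlob set (warning suppressed)")

    if _truthy(rule.get("StopProcessingRules")):
        reasons.append("StopProcessingRules set (later rules skipped)")

    folder = str(rule.get("MoveToFolder", "")).strip().lower()
    if folder in HIDING_FOLDERS:
        reasons.append("messages moved to a folder users rarely inspect")

    return reasons
```

A rule named “.” with all three properties set, moving mail to “Deleted Items”, would return four reasons, whereas an ordinary rule filing newsletters into a reading folder would return none. Each indicator can also appear in legitimate rules, so the output is best read as a prompt for review rather than a verdict.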

In this incident, the new email rule would likely have enabled the malicious actor to evade the detection of traditional security measures and achieve greater persistence using the Microsoft 365 account.

Account Update

A few hours after the creation of the new email rule, Darktrace observed the threat actor successfully changing the Microsoft 365 user’s account password, this time from a new IP address in Texas. This action would have locked out the legitimate user, effectively giving the attacker full control of the SaaS account.

Phishing Emails

The compromised SaaS account was then observed sending a high volume of suspicious emails to both internal and external email addresses. Darktrace was able to identify that the emails attempted to impersonate the legitimate service DocuSign and contained a malicious link behind the text “Review Document”. Upon clicking this link, users would be redirected to a site hosted on Adobe Express, namely hxxps://express.adobe[.]com/page/A9ZKVObdXhN4p/.
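The mismatch described here, an email presenting itself as one brand while linking to a host that belongs to another, can be checked mechanically. The following is a minimal sketch under stated assumptions: the `BRAND_DOMAINS` mapping is an illustrative, incomplete allow-list rather than an authoritative one, and both function names are hypothetical. It also "refangs" the defanged indicator notation used in this post (hxxp, [.]) for parsing only.

```python
from urllib.parse import urlsplit

# Assumption: illustrative, incomplete mapping of brands to domains they use.
BRAND_DOMAINS = {"docusign": ("docusign.com", "docusign.net")}


def refang(ioc: str) -> str:
    """Convert a defanged indicator (hxxp, [.]) back into a parseable URL."""
    return ioc.replace("hxxp", "http", 1).replace("[.]", ".")


def link_matches_brand(brand: str, url: str) -> bool:
    """True if the link's host is one of the domains expected for the brand."""
    host = (urlsplit(refang(url)).hostname or "").lower()
    expected = BRAND_DOMAINS.get(brand.lower(), ())
    # Match the domain itself or a true subdomain, not a lookalike prefix.
    return any(host == d or host.endswith("." + d) for d in expected)
```

Called with the brand "DocuSign" and the Adobe Express link above, `link_matches_brand` returns `False`, since the host is `express.adobe.com`. Legitimate senders sometimes link to third-party hosts too, so a mismatch is a reason for scrutiny rather than conclusive evidence of phishing.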

Adobe Express is a free service that allows users to create web pages which can be hosted and shared publicly; it is likely that the threat actor here leveraged the service to use in their phishing campaign. When clicked, such links could result in a device unwittingly downloading malware hosted on the site, or direct unsuspecting users to a spoofed login page attempting to harvest user credentials by imitating legitimate companies like Microsoft.

The malicious site hosted on Adobe Express was subsequently taken down by Adobe, possibly in response to user reports of maliciousness. Unfortunately though, platforms like this that offer free webhosting services can easily and repeatedly be abused by malicious actors. Simply by creating new pages hosted on different IP addresses, actors are able to continue to carry out such phishing attacks against unsuspecting users.

In addition to the suspicious SaaS and email activity that took place between May 9 and May 10, Darktrace/Email also detected the compromised account sending and receiving suspicious emails starting on May 4, just two days after Darktrace’s initial deployment in the customer’s environment. It is probable that the SaaS account was compromised around this time, or even prior to Darktrace’s deployment on May 2, likely via a phishing and credential harvesting campaign similar to the one detailed above.

Darktrace Coverage

As the customer was soon to begin their trial period, Darktrace RESPOND was set in “human confirmation” mode, meaning that any preventative RESPOND actions required manual application by the customer’s security team.

If Darktrace RESPOND had been enabled in autonomous response mode during this incident, it would have taken swift mitigative action by logging the suspicious user out of the SaaS account and disabling the account for a defined period of time, in doing so disrupting the attack at the earliest possible stage and giving the customer the necessary time to perform remediation steps. As it was, however, these RESPOND actions were suggested to the customer’s security team for them to manually apply.

Nevertheless, with Darktrace/Apps in place, visibility over the anomalous cloud-based activities was significantly increased, enabling the swift identification of the chain of suspicious activities involved in this compromise.

In this case, the prospective customer reached out to Darktrace directly through the Ask the Expert (ATE) service. Darktrace’s expert analyst team then conducted a timely and comprehensive investigation into the suspicious activity surrounding this SaaS compromise, and shared these findings with the customer’s security team.

Conclusion

Ultimately, this example of SaaS account compromise highlights Darktrace’s unique ability to learn an organization’s digital environment and recognize activity that is deemed to be unexpected, within a matter of days.

Due to the lack of obvious or known indicators of compromise (IoCs) associated with the malicious activity in this incident, this account hijack would likely have gone unnoticed by traditional security tools that rely on a rule- and signature-based approach to threat detection. However, Darktrace’s Self-Learning AI enables it to detect the subtle deviations in a device’s behavior that could be indicative of an ongoing compromise.

Despite being newly deployed on a prospective customer’s network, Darktrace DETECT was able to identify unusual login attempts from geographically improbable locations, suspicious email rule updates and password changes, as well as the subsequent mounting of a phishing campaign, all before the customer’s trial of Darktrace had even begun.

When enabled in autonomous response mode, Darktrace RESPOND would be able to take swift preventative action against such activity as soon as it is detected, effectively shutting down the compromise and mitigating any subsequent phishing attacks.

With the full deployment of Darktrace’s suite of products, including Darktrace/Apps and Darktrace/Email, customers can rest assured their critical data and systems are protected, even in the case of hybrid and multi-cloud environments.

Credit: Samuel Wee, Senior Analyst Consultant & Model Developer

Appendices

References

[2] https://www.upguard.com/blog/saas-security-risks

[4] https://learn.microsoft.com/en-us/powershell/module/exchange/disable-inboxrule?view=exchange-ps

[7] https://blog.knowbe4.com/check-your-email-rules-for-maliciousness

Darktrace Model Detections

Darktrace DETECT and RESPOND Models Breached:

SaaS / Access / Unusual External Source for SaaS Credential Use

SaaS / Unusual Activity / Multiple Unusual External Sources for SaaS Credential

Antigena / SaaS / Antigena Unusual Activity Block (RESPOND Model)

SaaS / Compliance / New Email Rule

Antigena / SaaS / Antigena Significant Compliance Activity Block

SaaS / Compromise / Unusual Login and New Email Rule (Enhanced Monitoring Model)

Antigena / SaaS / Antigena Suspicious SaaS Activity Block (RESPOND Model)

SaaS / Compromise / SaaS Anomaly Following Anomalous Login (Enhanced Monitoring Model)

SaaS / Compromise / Unusual Login and Account Update

Antigena / SaaS / Antigena Suspicious SaaS Activity Block (RESPOND Model)

IoC – Type – Description & Confidence

hxxps://express.adobe[.]com/page/A9ZKVObdXhN4p/ – Domain – Probable Phishing Page (Now Defunct)

37.19.221[.]142 – IP Address – Unusual Login Source

35.174.4[.]92 – IP Address – Unusual Login Source

MITRE ATT&CK Mapping

Tactic – Techniques

INITIAL ACCESS, PRIVILEGE ESCALATION, DEFENSE EVASION, PERSISTENCE

T1078.004 – Cloud Accounts

DISCOVERY

T1538 – Cloud Service Dashboards

CREDENTIAL ACCESS

T1539 – Steal Web Session Cookie

RESOURCE DEVELOPMENT

T1586 – Compromise Accounts

PERSISTENCE

T1137.005 – Outlook Rules
