Despite being widely recognized as a serious threat for a number of years, ransomware continues to persist. The total global cost of this threat vector is projected to reach $20 billion by 2021. With this level of financial return for attackers, it is no wonder that they continue to develop new strains of ransomware and advance their techniques to bypass security tools and ensure their campaigns are successful.

In the last few weeks, Darktrace’s AI has detected an attacker abusing off-the-shelf products to deploy ransomware at an African retailer, along with high-profile WastedLocker and Emotet attacks. Here, we look at Eking ransomware – a variant of the Phobos ransomware family – that targeted a government organization in the APAC region.

This attack was likely an example of Ransomware-as-a-Service (RaaS), a particularly concerning threat for security teams because it allows lower-level actors to get hold of sophisticated malware. This blog post breaks down Eking ransomware in detail, showing how Cyber AI enabled the defenders to recognize the anomalous behavior as soon as it occurred and stop the threat from advancing and causing damage. It also shows how Darktrace’s Cyber AI Analyst autonomously investigated the broader security incident, generating an easy-to-understand and actionable report as the activity unfolded.

An overview of the attack

An internal server was infected with Eking ransomware via an attack vector outside of Darktrace’s visibility, most likely an employee clicking a malicious link within an email. Antigena Email would likely have identified suspicious characteristics of the email and stopped it from reaching employees’ inboxes, preventing the threat at the first hurdle. However, in this instance, the customer had only deployed Cyber AI across their network. This still enabled Darktrace’s Immune System to identify lateral movement and encryption activity indicative of ransomware.

The infected device began engaging in internal reconnaissance activity on a single internal subnet. This included SMB enumeration via the SRVSVC and winreg pipes, as well as extensive scanning over 10 commonly exploited ports. Indicators of Nmap were also detected during this phase of the attack.
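To make the reconnaissance pattern concrete, here is a minimal Python sketch of how a single source touching many hosts and many ports inside one subnet could be flagged from connection records. It is an illustration only and not Darktrace's detection logic: the function name `find_scanners`, the record layout `(src_ip, dst_ip, dst_port)`, the example addresses, and both thresholds are assumptions chosen for the example.

```python
from collections import defaultdict
from ipaddress import ip_address, ip_network


def find_scanners(connections, subnet, min_hosts=20, min_ports=10):
    """Return source IPs that contacted many hosts and ports in one subnet.

    connections: iterable of (src_ip, dst_ip, dst_port) tuples (assumed layout).
    min_hosts / min_ports: illustrative thresholds, not vendor values.
    """
    net = ip_network(subnet)
    hosts = defaultdict(set)  # src -> distinct destination hosts in the subnet
    ports = defaultdict(set)  # src -> distinct destination ports in the subnet
    for src, dst, port in connections:
        if ip_address(dst) in net:
            hosts[src].add(dst)
            ports[src].add(port)
    return sorted(
        src
        for src in hosts
        if len(hosts[src]) >= min_hosts and len(ports[src]) >= min_ports
    )


if __name__ == "__main__":
    common_ports = (21, 22, 23, 80, 135, 139, 443, 445, 3389, 5900)
    # One device sweeping 30 hosts on 10 ports, plus one ordinary connection.
    records = [
        ("10.0.0.5", f"10.0.1.{host}", port)
        for host in range(1, 31)
        for port in common_ports
    ]
    records.append(("10.0.0.9", "10.0.1.7", 445))
    print(find_scanners(records, "10.0.1.0/24"))
```

A fixed threshold like this is far cruder than a learned baseline, but it shows why a sweep of one subnet stands out against a device's usual handful of destinations.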

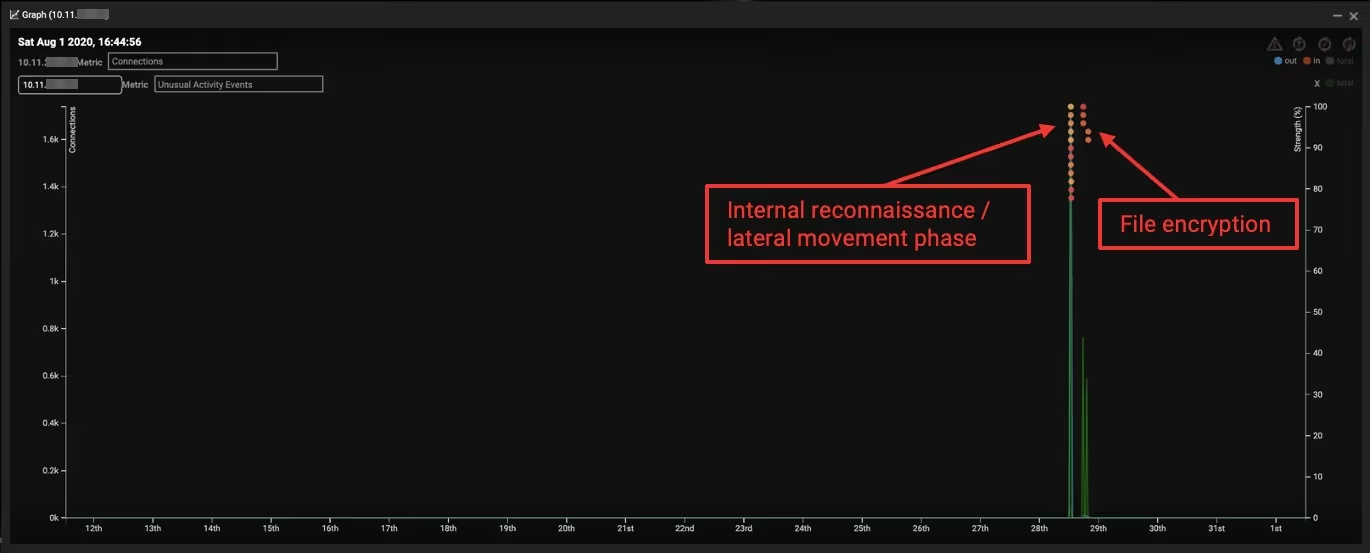

About four and a half hours after this scanning concluded, the infected server began encrypting files on a second server. The device transitioned from making just a few internal connections per day to making thousands in less than an hour. This dramatic shift in behavior was immediately detected by Darktrace’s AI as highly threatening and the Cyber AI Analyst began autonomously investigating.

Figure 1: An overview of events
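The jump from a few internal connections per day to thousands within an hour can be illustrated with a simple per-device baseline check. The Python sketch below is a deliberately naive stand-in, not a description of how Darktrace's AI works: the function `is_anomalous`, the hourly-count input, and the `min_sigma` and `min_ratio` thresholds are all assumptions made for illustration.

```python
from statistics import mean, pstdev


def is_anomalous(history, current, min_sigma=3.0, min_ratio=10.0):
    """Flag `current` if it far exceeds the device's own past hourly counts.

    history: past hourly internal-connection counts for one device.
    current: the count observed in the hour under review.
    """
    baseline = mean(history)
    spread = pstdev(history)
    # Floors of 1.0 keep very quiet devices from triggering on tiny changes.
    above_sigma = current > baseline + min_sigma * max(spread, 1.0)
    above_ratio = current >= min_ratio * max(baseline, 1.0)
    return above_sigma and above_ratio


if __name__ == "__main__":
    quiet_server = [0, 1, 0, 2, 0, 0, 1, 0]  # a few connections per day
    print(is_anomalous(quiet_server, 3))      # ordinary hour
    print(is_anomalous(quiet_server, 2400))   # thousands in under an hour
```

Requiring both a statistical and a ratio test is one possible design choice: it avoids alerting on a device whose count merely doubles from one to two connections.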

Internal reconnaissance and encryption – sometimes referred to as detonation – took place late at night local time. This may have been strategic on the part of the attackers, as the number of security professionals actively monitoring the network was probably lower, slowing the speed of the organization’s response. Endpoint defenses did not prevent the threat – likely indicating that this was a slightly modified strain of the Eking ransomware that was able to bypass these signature-based tools.

While Darktrace provides complete coverage across email, IoT, and cloud environments, business challenges or segmentation sometimes prevent security teams from obtaining full visibility across their organization. However, even when working with imperfect data and suboptimal coverage, Cyber AI still identified this threat as it emerged.

AI Analyst coverage

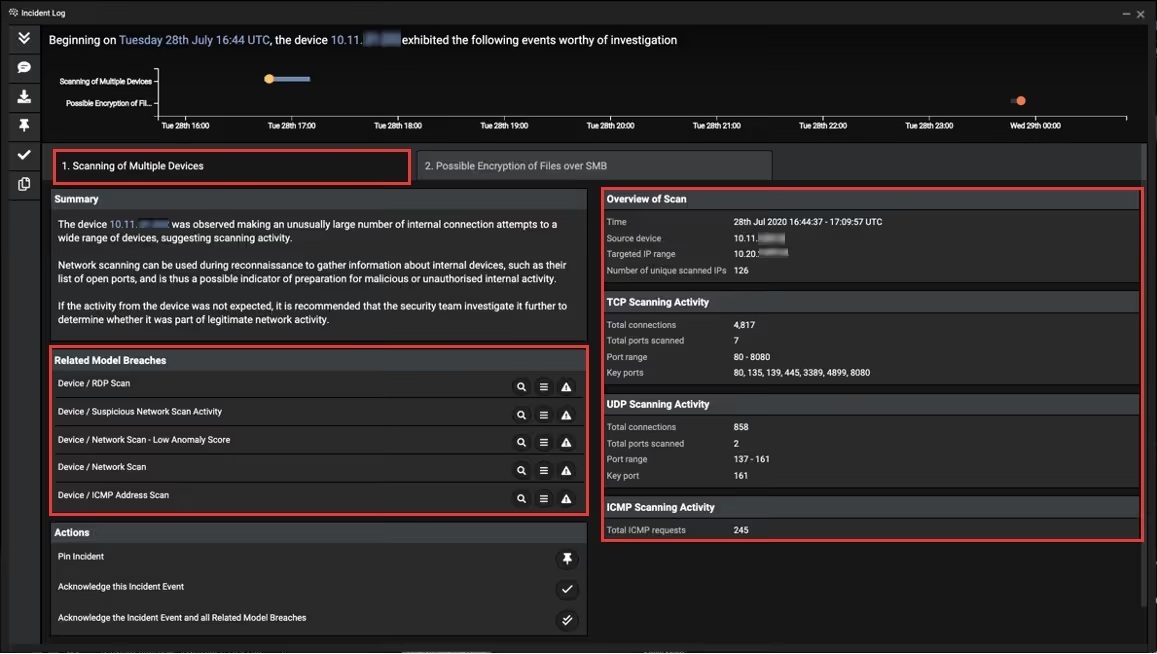

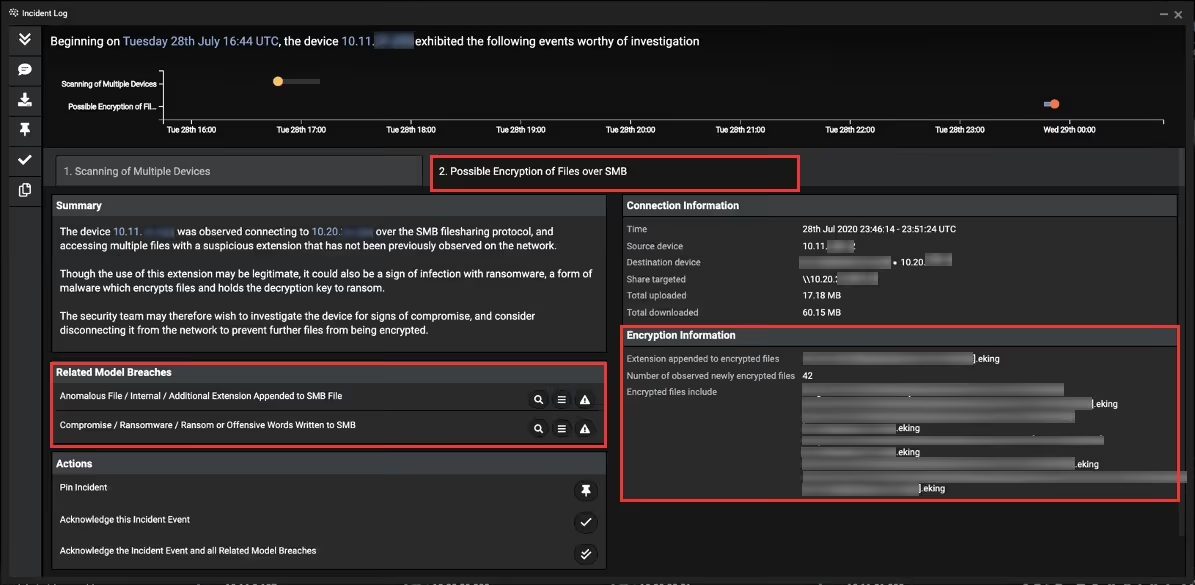

When the first model breach occurred, this triggered Darktrace’s Cyber AI Analyst to launch a real-time investigation into the events as they unfolded. Piecing together the lateral movement and the later encryption, the technology recognized that these separate events were part of a wider security narrative. It surfaced an incident summary and several key metrics for the security team to review and action a response.

Figure 2: Internal reconnaissance of the subnet over a number of sensitive ports

Figure 3: Encryption phase of the attack

Figure 4: A graph of connections and unusual activity demonstrating how significant of a deviation this activity was from normal device behavior

Off the shelf: The commercialization of cyber-crime

This incident demonstrates how the rise in Ransomware-as-a-Service is allowing lower-level threat actors to access sophisticated strains of ransomware as well as novel variants of well-known attacks. The cyber-crime market is estimated to be worth $1.6 billion, and this figure is only likely to rise as the relatively new ‘industry’ matures. As a result, the potential perpetrators of advanced cyber-attacks like the one detailed above are no longer confined to professional cyber-criminal rings, which now offer their tactics, techniques and procedures to a wider range of threat actors willing to pay the right price. As lower-level threat actors get access, more organizations will find themselves targeted by increasingly sophisticated threats.

Just this week, Darktrace observed a high-profile example of RaaS in a Sodinokibi ransomware attack that hit a retail organization in the US. The infected device engaged in anomalous administrative activities before writing an unusual executable file, sharing it with other internal locations and then encrypting multiple files on the network and writing its own ransom note files.

With ransomware attacks continuing to target organizations large and small, security teams are fundamentally changing their approach to cyber defense, turning to artificial intelligence to stop attacks that other tools miss. Without relying on pre-defined rules and signatures, Cyber AI learns a sense of ‘self’ for a unique organization to detect and respond to anomalous activity as soon as it occurs.

Fight back with Autonomous Response

Threat actors know that deploying ransomware at weekends or at night is more likely to succeed because an organization’s response time is typically slower. Darktrace’s Autonomous Response operates around the clock, taking targeted and proportionate action to contain malicious activity wherever it occurs, whether in the network, in email, or in cloud and SaaS applications.

Had Darktrace Antigena been deployed at this government organization in APAC, it would have taken action at the first stage of the attack, as the initial scanning took place, and prevented the malware from ever reaching the encryption stage. However, in this case, when the security team returned to the office the next morning, they were still able to act faster than they otherwise would have and limit the damage, thanks to the fully investigated incident and actionable intelligence provided by the Cyber AI Analyst.

Thanks to Darktrace analyst Brian Evans for his insights on the above threat find.


IoCs:

| IoC | Comment |
| --- | --- |
| .eking | Eking encryption extension |
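As a rough illustration of how the `.eking` indicator could be used, the Python sketch below filters a list of file paths for names ending in that extension. The helper name `find_eking_files` and the sample paths are hypothetical, and a suffix match on its own is only a starting point for triage rather than a complete detection.

```python
EKING_EXTENSION = ".eking"  # IoC taken from the table above


def find_eking_files(paths):
    """Return the paths whose file name ends with the .eking extension.

    The comparison is case-insensitive; `paths` is any iterable of strings.
    """
    return [p for p in paths if p.lower().endswith(EKING_EXTENSION)]


if __name__ == "__main__":
    # Hypothetical file listing gathered from a share under investigation.
    listing = [
        "finance/q3.xlsx",
        "finance/q3.xlsx.eking",
        "notes/todo.txt.EKING",
        "eking-notes.txt",
    ]
    for hit in find_eking_files(listing):
        print(hit)
```

Matching on the end of the name, rather than anywhere in it, keeps a benign file such as `eking-notes.txt` out of the results.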

Darktrace model detections:

- Device / ICMP Address Scan

- Unusual Activity / Unusual Internal Connections

- Device / Network Scan - Low Anomaly Score

- Device / Network Scan

- Anomalous Connection / Unusual Internal Remote Desktop

- Device / RDP Scan

- Device / Suspicious Network Scan Activity

- Anomalous Connection / SMB Enumeration

- Anomalous Connection / Unusual Admin RDP Session

- Device / Multiple Lateral Movement Model Breaches

- Compromise / Ransomware / Suspicious SMB Activity

- Compromise / Ransomware / Ransom or Offensive Words Written to SMB

- Anomalous File / Internal / Additional Extension Appended to SMB File
